Benefits of Code Coverage

Quality in Software

Software quality can be improved in different ways and at various stages of the development process. QA teams are responsible for testing user interfaces, detecting defects, checking usability and, most importantly, verifying that the resulting software conforms to the requirements. At a different stage of the process, developers are responsible for making sure that components conform to the specification and function correctly.

Although their goals are very similar, the means used in each case can vary. In QA, the process is often manual: engineers go through a series of use-case scenarios and try to discover potential issues. At the code level, with the ever-increasing adoption of unit testing, code is tested automatically, which also makes regression testing possible. In addition, when techniques such as Test Driven Development or Behavior Driven Development are used, the promise is that no code is written without an associated test.

Still, we often find systems with large amounts of code that is not covered by any type of test, which leads to defects in production. By defects, we mean incorrect behavior caused by programming errors, not misinterpretations of requirements. Often these issues are edge cases, or cases where the developer assumed that a certain piece of code was working correctly when in reality it might never even have run. No matter how competent QA engineers are or how disciplined developers are, there is always a high probability of this happening. How can we best solve the problem without incurring high costs?

Code Coverage

The problem stems from the fact that parts of our code are not covered by any type of test, which can lead to defects in production.

Trying to locate these parts manually, be it a class, a module or a few lines of code, can be nearly impossible and is certainly not cost-effective. Code coverage tools, on the other hand, let us perform this analysis in a simple, automated way. They run a profiler alongside our tests, record which parts of the code are actually executed, and report the overall percentage of code covered. In general, the higher that percentage, the less untested code there is in which defects can hide until production.
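To make the mechanism concrete, here is a minimal sketch in Python of what a line-coverage tool does. It is a toy under stated assumptions, not how dotCover works internally (dotCover profiles .NET code): the hypothetical `measure_line_coverage` helper uses the standard `sys.settrace` hook to record which lines of a single function run, compares them with the lines that carry bytecode according to `dis.findlinestarts`, and returns the coverage percentage together with the body lines that never executed. The `classify` function is likewise a made-up example.

```python
import dis
import sys


def classify(n):
    if n < 0:
        return "negative"
    if n == 0:
        return "zero"
    return "positive"


def measure_line_coverage(func, calls):
    """Call `func` with each argument tuple in `calls` and report line coverage.

    Returns (percent, missed): the share of func's body lines that executed,
    and the unexecuted ones numbered relative to the def line (1 = first body line).
    """
    code = func.__code__
    first = code.co_firstlineno
    # Body lines that carry bytecode; the def line itself is excluded.
    executable = {
        line
        for _, line in dis.findlinestarts(code)
        if line is not None and line != first
    }
    executed = set()

    def tracer(frame, event, arg):
        if frame.f_code is not code:
            return None  # ignore frames of every other function
        if event == "line":
            executed.add(frame.f_lineno)
        return tracer  # keep receiving line events for this frame

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        for args in calls:
            func(*args)
    finally:
        sys.settrace(previous)  # restore whatever tracer was active before

    covered = executed & executable
    percent = 100.0 * len(covered) / len(executable)
    missed = sorted(line - first for line in executable - covered)
    return percent, missed


# Only the positive and zero cases are exercised here.
percent, missed = measure_line_coverage(classify, [(5,), (0,)])
print(f"{percent:.0f}% of lines covered; missed body lines: {missed}")
```

Because `classify` is only called with 5 and 0, the `return "negative"` branch never runs, so the sketch reports 80% with that single line listed as missed. The percentage alone says something is untested; the missed-line list says exactly where the next test is needed.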

dotCover as a Coverage Tool

To be effective, a code coverage tool has to be flexible enough to adapt to software systems as they actually exist, and a high percentage of existing applications have no automated tests in place at all. As such, coverage tools should allow applications to be profiled for coverage either by running a suite of automated unit tests or by executing the application manually, much as QA engineers do.

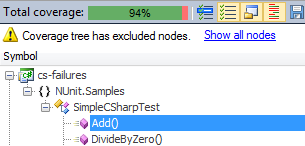

With dotCover, we get both of these options. By running dotCover on our unit tests, we can examine how much of our code is actually covered by those tests. Unlike Microsoft Visual Studio Coverage, dotCover works with many unit testing frameworks, not only MSTest. This is a great advantage for developers who prefer more sophisticated, feature-rich testing frameworks such as NUnit, MSpec or xUnit.

In addition to analyzing coverage through tests, one of the key benefits of dotCover is its ability to measure coverage while the application itself is running. That is, a QA engineer could execute a use-case scenario and then examine which parts of the code were actually executed during it.

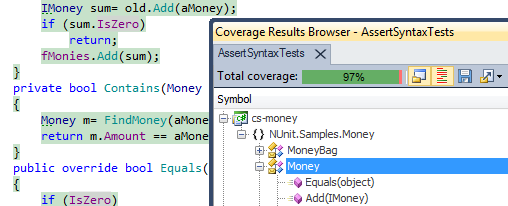

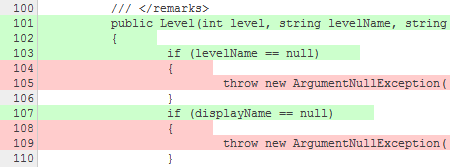

Both approaches offer invaluable information. Because the report shows the exact lines of code executed during a run, developers can detect sections of code that they presumed had been executed but in fact had not. This level of insight is by far the most valuable aspect of a coverage tool. By making the process simple, we drastically reduce the cost of locating untested edge cases and help avoid problems in production.

While the coverage percentage tells us how much of our code is exercised, knowing exactly which lines were executed is what points us to potential defects.

Seamless Integration with the IDE and Continuous Integration Environments

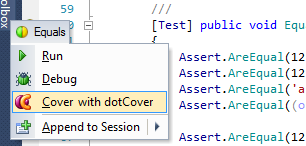

Code coverage should be an effortless process: the easier it is to work with, the more effective it will be. dotCover integrates with Visual Studio and ReSharper, offering single-click coverage of entire assemblies, entire test fixtures or single tests.

Coverage results are shown in place, without requiring developers to switch to any external tool.

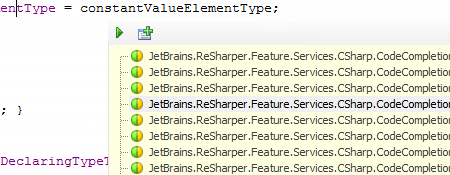

dotCover can also locate the tests that cover a given line of code, that is, the tests that may be impacted when that line changes.
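The idea behind finding the tests that reach a particular line can be sketched as an inverted index: record which lines each test executes, then map every line back to the tests that reached it. The Python below is a toy illustration of that idea under stated assumptions, not dotCover's implementation; `classify`, the three `test_*` functions, `lines_hit_by` and `build_impact_map` are all hypothetical names, and line numbers are counted relative to the `def` line of the function under test.

```python
import sys
from collections import defaultdict


def classify(n):
    if n < 0:
        return "negative"
    if n == 0:
        return "zero"
    return "positive"


# Hypothetical tests for classify.
def test_positive():
    assert classify(5) == "positive"


def test_zero():
    assert classify(0) == "zero"


def test_negative():
    assert classify(-3) == "negative"


def lines_hit_by(test, target):
    """Run one test and return the body lines of `target` it executed,
    numbered relative to target's def line (1 = first body line)."""
    code = target.__code__
    first = code.co_firstlineno
    hit = set()

    def tracer(frame, event, arg):
        if frame.f_code is not code:
            return None  # do not trace lines of other functions
        if event == "line" and frame.f_lineno != first:
            hit.add(frame.f_lineno - first)
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        test()
    finally:
        sys.settrace(previous)  # restore any tracer that was active before
    return hit


def build_impact_map(tests, target):
    """Invert per-test coverage into: line -> names of tests that executed it."""
    impact = defaultdict(set)
    for test in tests:
        for line in lines_hit_by(test, target):
            impact[line].add(test.__name__)
    return impact


impact = build_impact_map([test_positive, test_zero, test_negative], classify)
# Which tests should be re-run if the `return "zero"` line changes?
print(sorted(impact[4]))
```

In this toy run only `test_zero` reaches line 4 (`return "zero"`), while line 1 (`if n < 0:`) is reached by all three tests. Collecting coverage per test rather than only in aggregate is what makes this reverse lookup possible.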

When it comes to setting up Continuous Integration environments, JetBrains TeamCity has first-class integration with dotCover and bundles a free version of it, so coverage reports can be produced without much effort.

Support for Multiple Application Platforms

dotCover is one of the few coverage tools that work with a variety of application types. Based on the same profiling engine as dotTrace Performance, dotCover supports standalone applications, Silverlight, web applications and services, among others. This allows QA engineers to perform coverage analysis regardless of the type of system.

Although Code Coverage tools cannot solve the issues associated with incorrect behavior of software systems due to misunderstanding or misinterpretation of requirements, they do provide a very efficient way to minimize defects which originate from programming errors by making the detection process cost-effective and simple.