Import/Export options

Import CSV

Import data from file

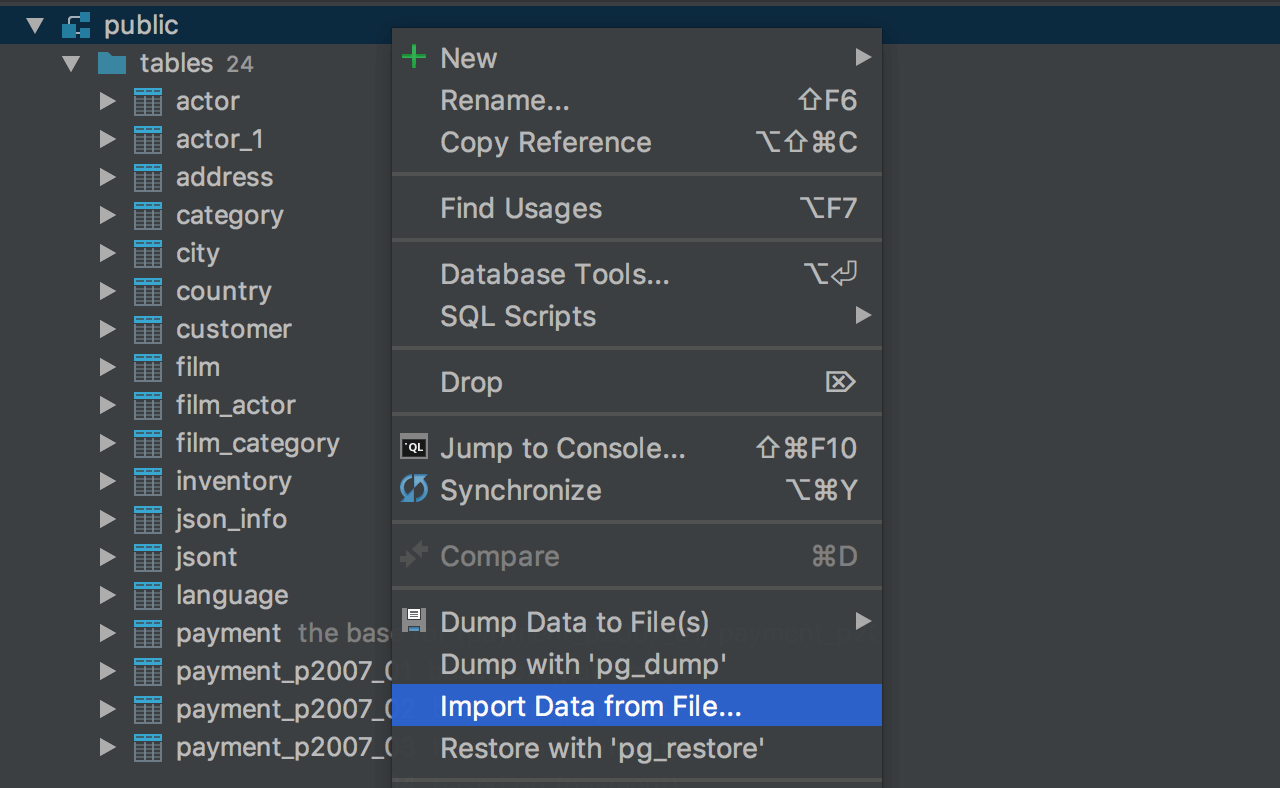

There is a dedicated UI for importing DSV (CSV and TSV) files to the database. Click the schema you wish to import data to, and choose Import From File… from the context menu. Then select the CSV file where your data is stored.

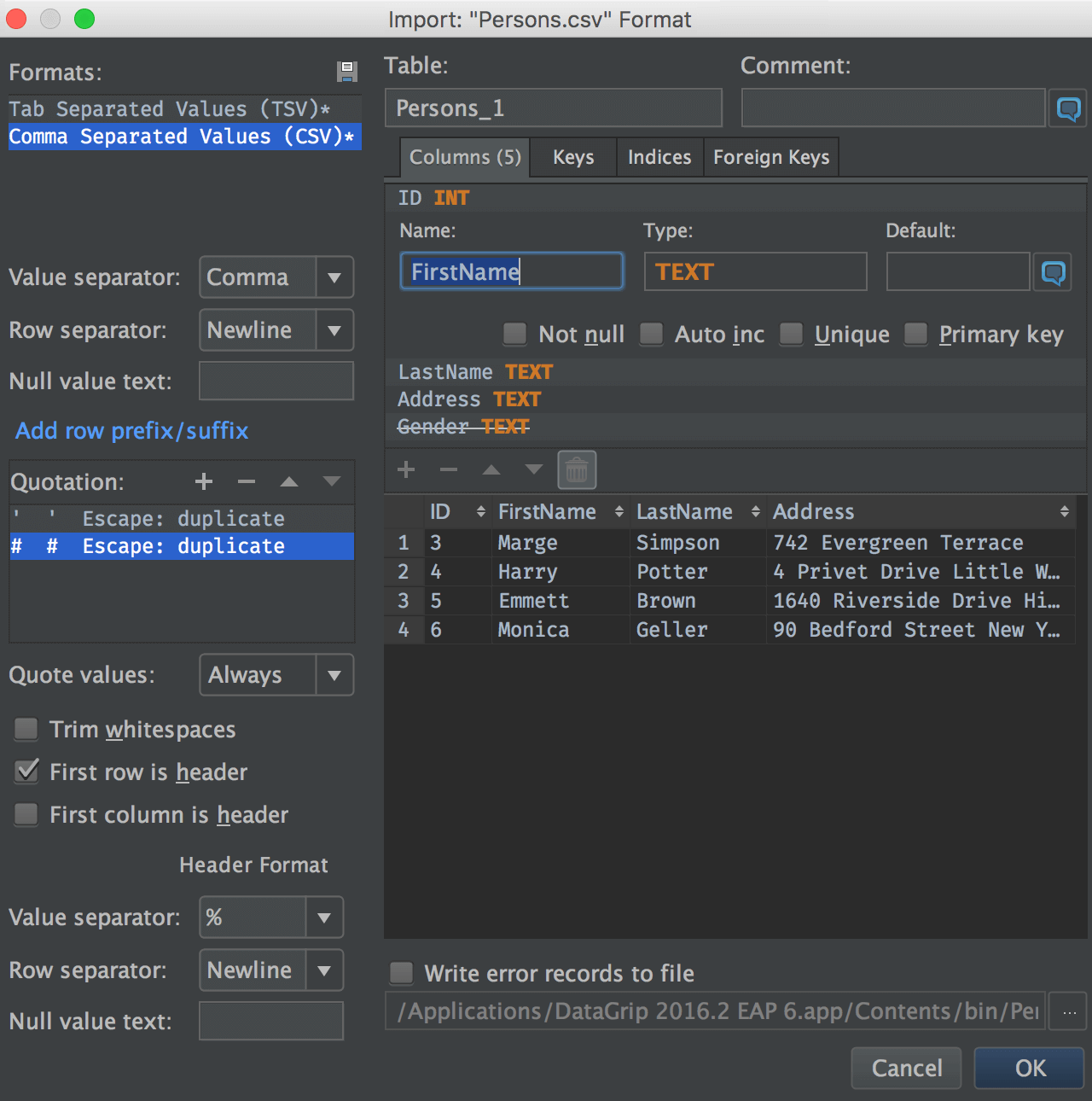

You will see the Import dialog window. The left-hand panel is for format specification:

choose the delimiter, if the first row is the header (the separate format options are

available for it), and specify if you have quoted values in the file.

On the right-hand side, you see the frame describing the table to be created and the

result

data

preview. Press Delete to remove a column from the result.

If

you want to import data from an existing table, just use the context menu of this

particular

table to choose Import From File…

What happens if there are errors in the file? A write error records to file option is available. The import process will not be interrupted, but all the wrong lines will be recorded in this file.

Paste CSV to the data editor

Paste data from Excel tables. Generally to do this, you need the ability to paste data in a DSV format. In DataGrip you can define any format you want, or you can let the IDE detect the format automatically: Gear icon → Paste format.

CSV editor

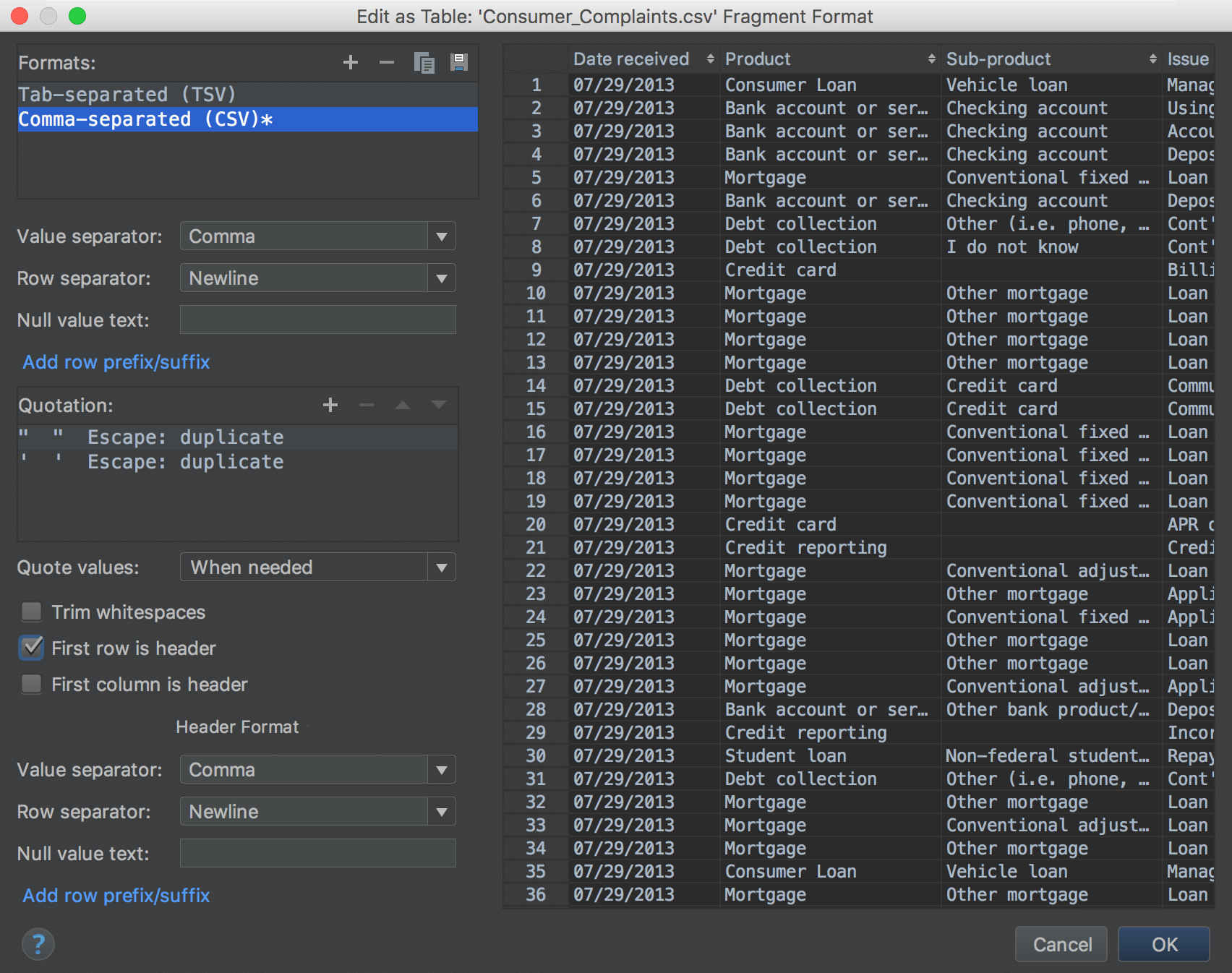

DataGrip can edit CSV files as tables. Click on Edit As Table in the context menu.

Then choose the delimiter, specify if the table has a header, and when to quote values, etc. An instant table preview is available.

Export data

What and where

Table

A table can be exported to:

— File. Context menu of a table → Dump data to file.

— Another existing table. Context menu of a table → Copy Table to (or

just

F5 on a table) → Choose existing table.

— New table in any data source of any database vendor. Context menu of a table

→

Copy

Table to (or just F5 on a table) → Choose target schema. Or just drag-n-drop it

there!

This gif illustrates how a PostgreSQL table can be copied to a SQLite data

source.

SQL query

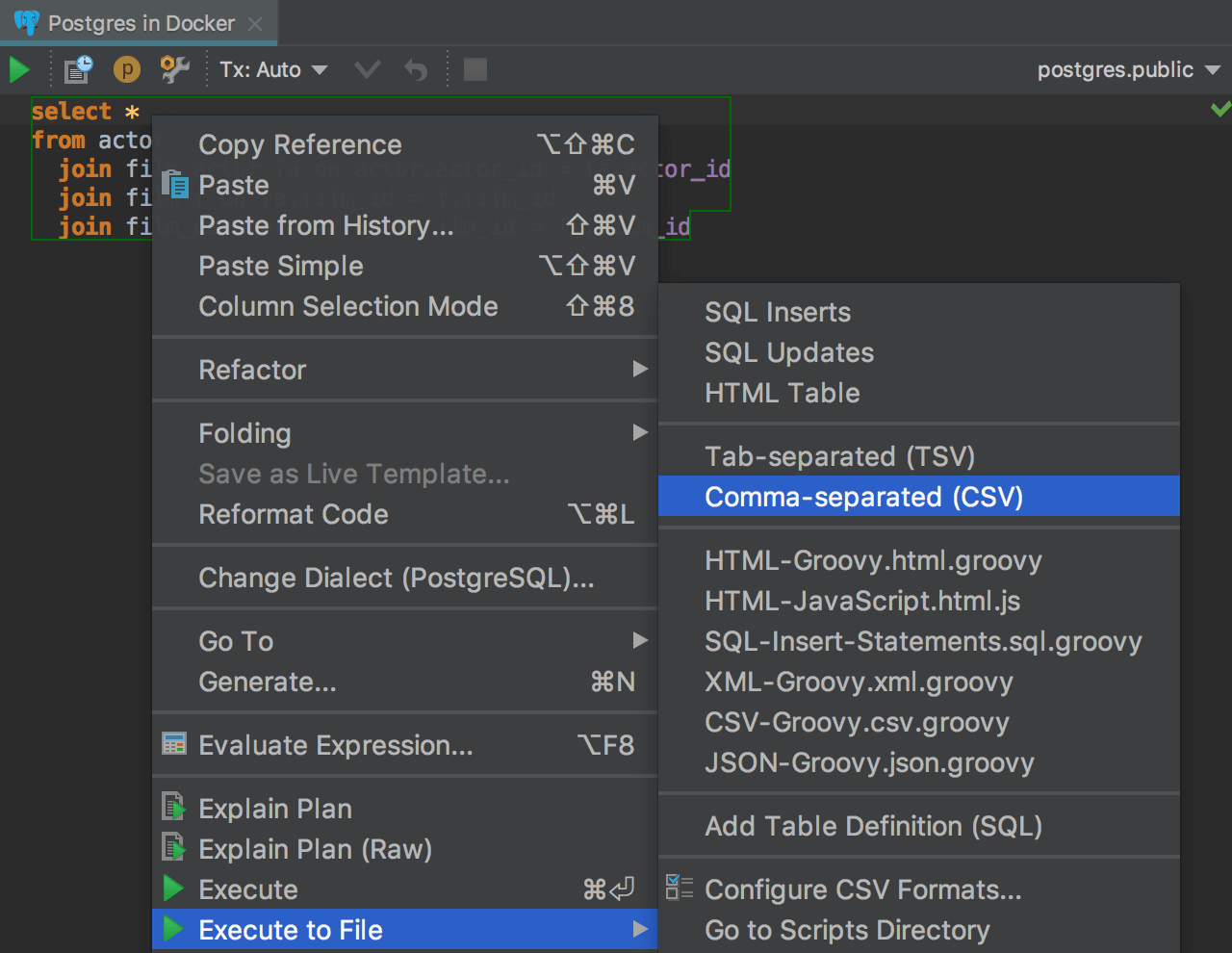

SQL query can be exported to a file as a result. Context menu on a statement → Execute to file → Choose the format. It is useful if the query is slow, if you export the result-set, the query will run again. In this case you run it only once.

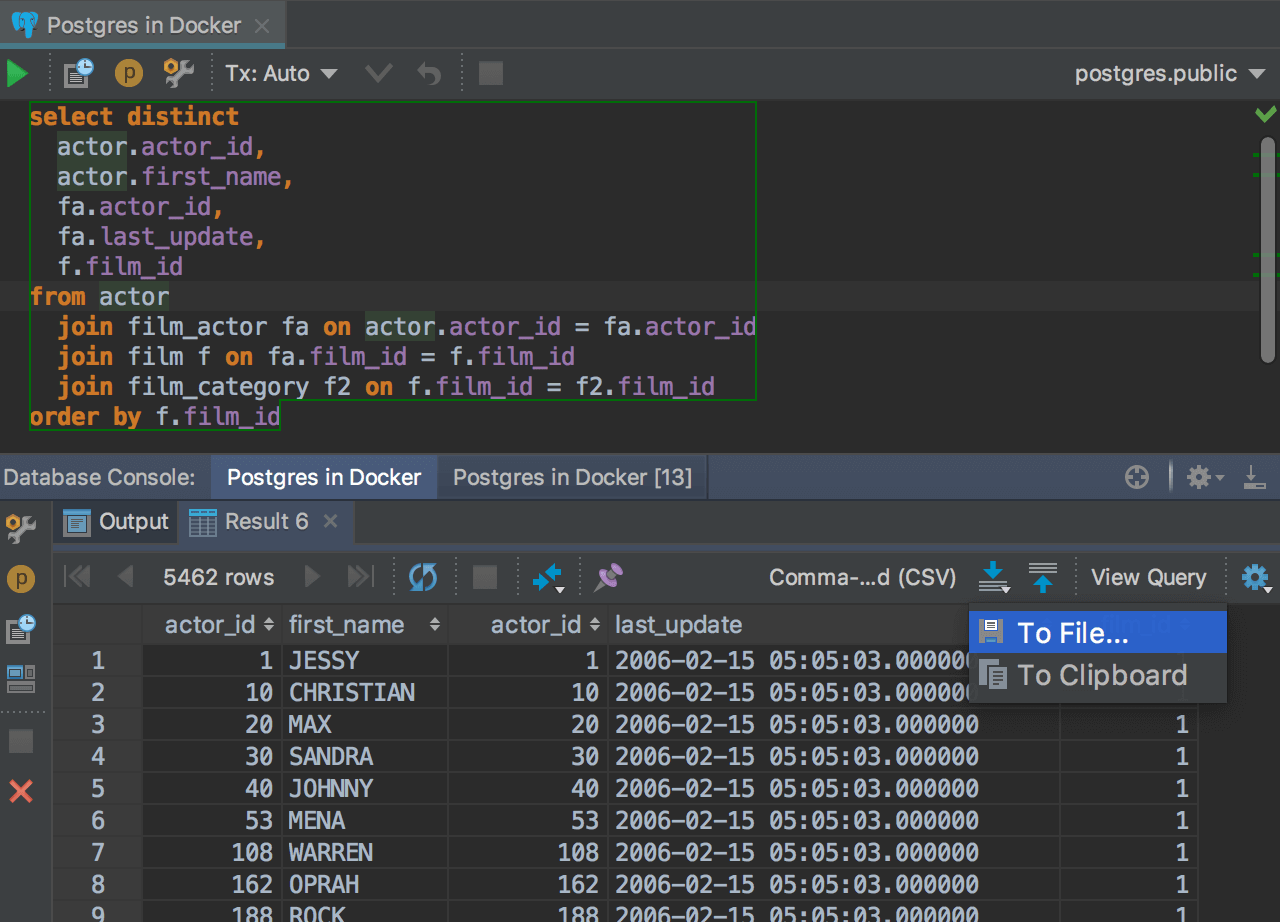

Result-set

A result-set can be exported to:

— File. Dump button ![]() → To

File.

→ To

File.

— Clipboard. Dump button ![]() → To

Clipboard.

→ To

Clipboard.

— Another existing table. Export button ![]() → Choose existing table.

→ Choose existing table.

— New table in any data source of any database vendor. Export button ![]() → Choose target

schema.

→ Choose target

schema.

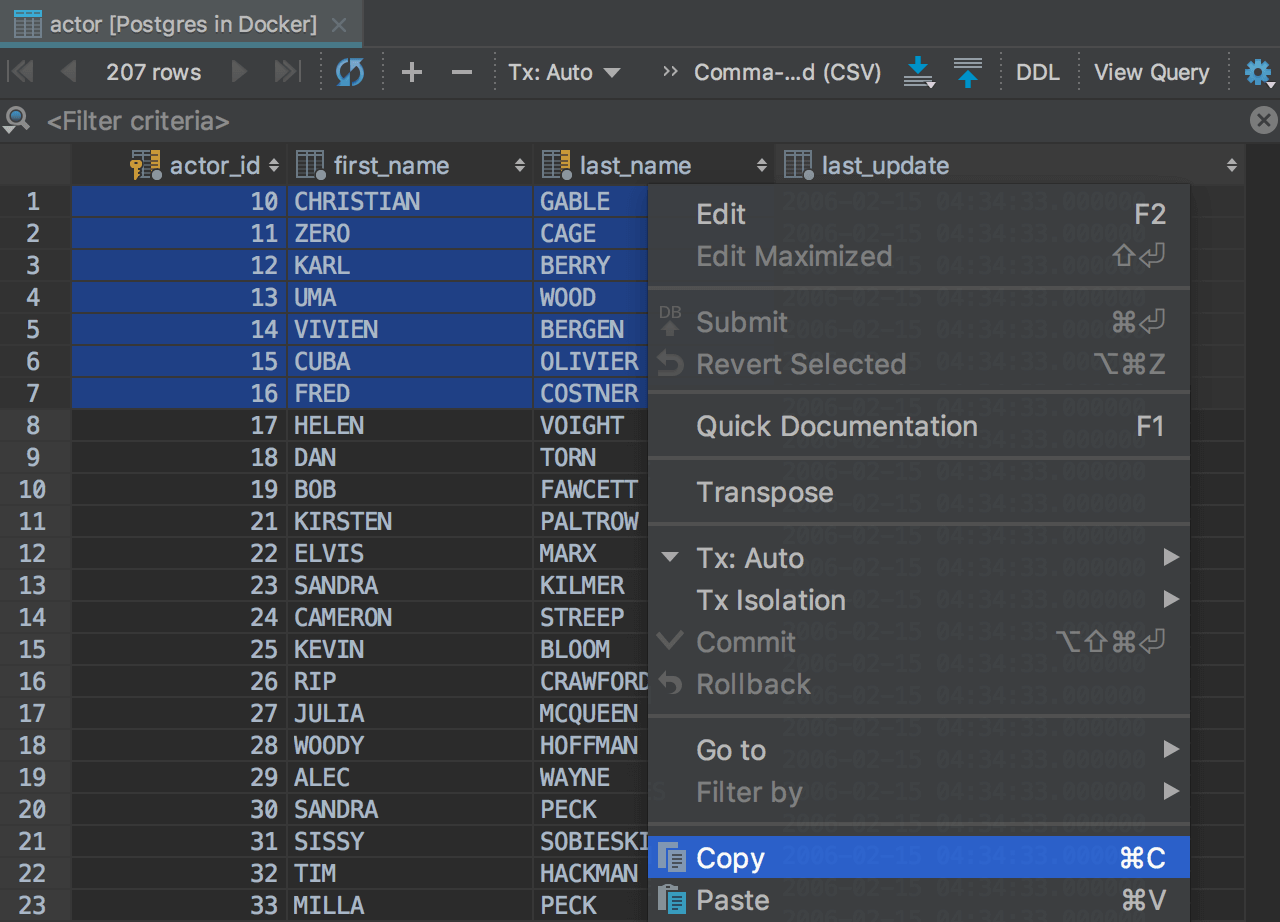

Selection

A selection in the data editor can be copied to the clipboard: it can be done in the usual way with Context menu → Copy or Ctrl+C.

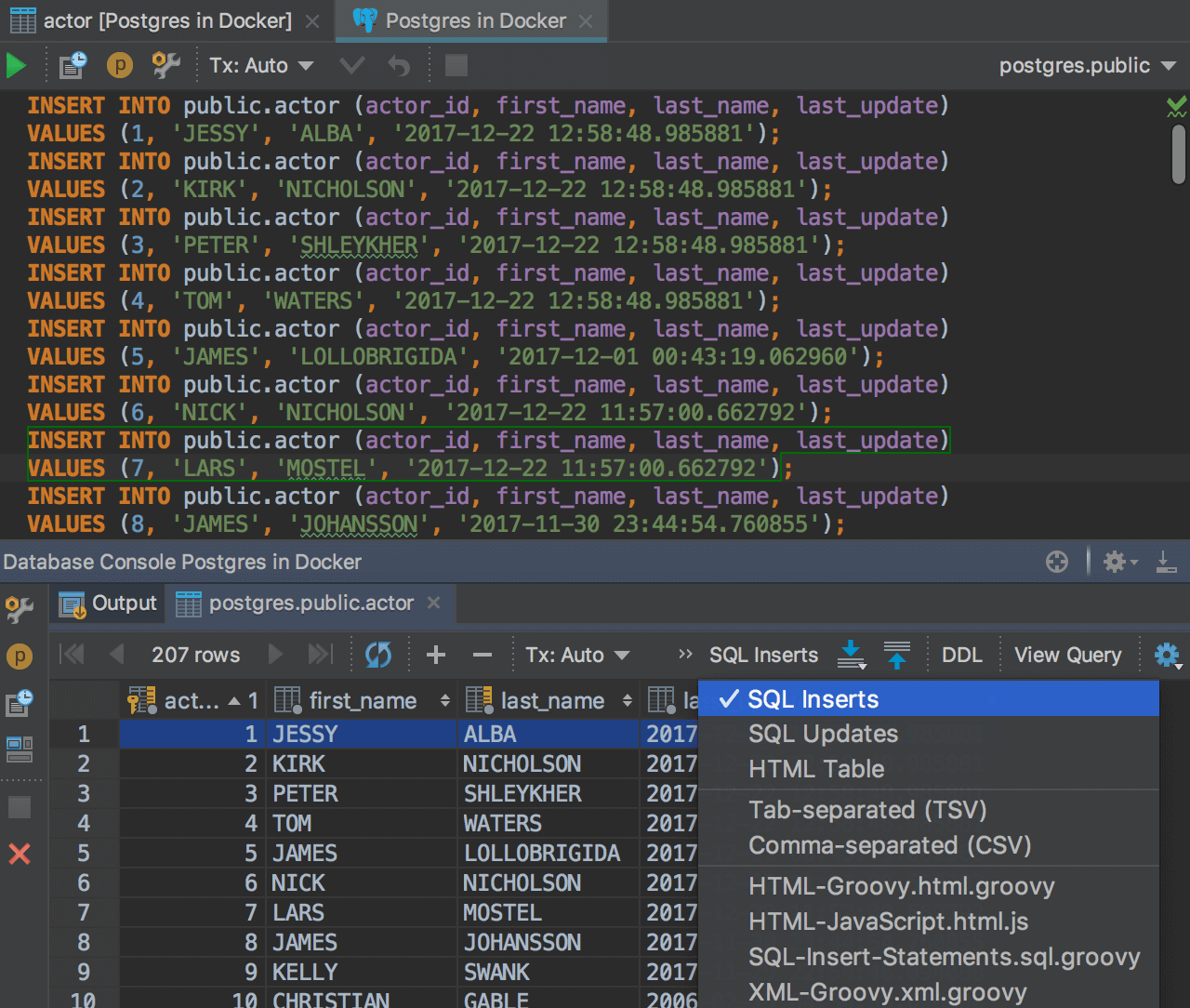

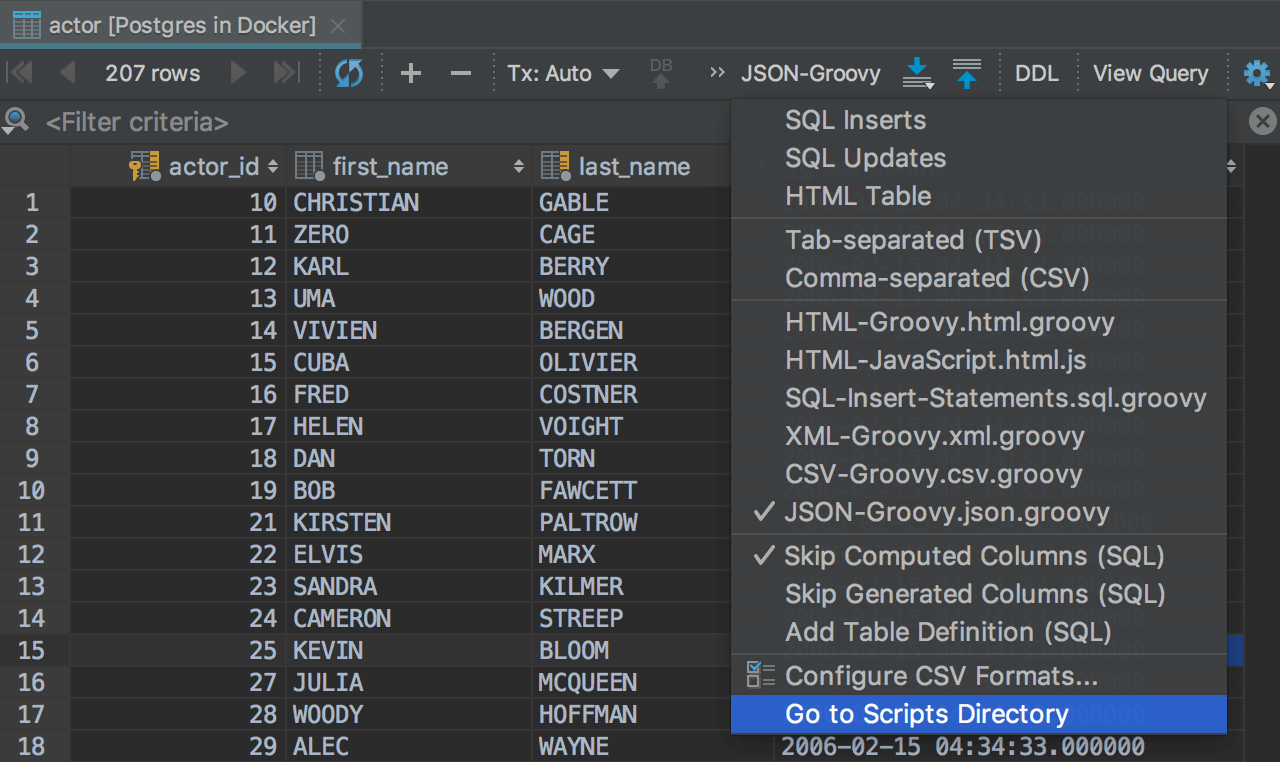

Export formats

Every time you export to a file or to the clipboard, you need to choose a format. Usually, it is CSV, but in many cases you need JSON, XML or even DML statements. Choose the appropriate Data Extractor from the drop-down list. Or create your own export format.

SQL queries

Any table or result-set can be presented

as a batch of INSERT statements. To do this, choose SQL Inserts from

the

drop-down list. In some situations

it can be faster than writing a INSERT+SELECT query for adding

data to another table. This generation is aware of auto increment IDs.

It can be also can be also presented

as

a batch of UPDATE statements, which

can help you to modify data.

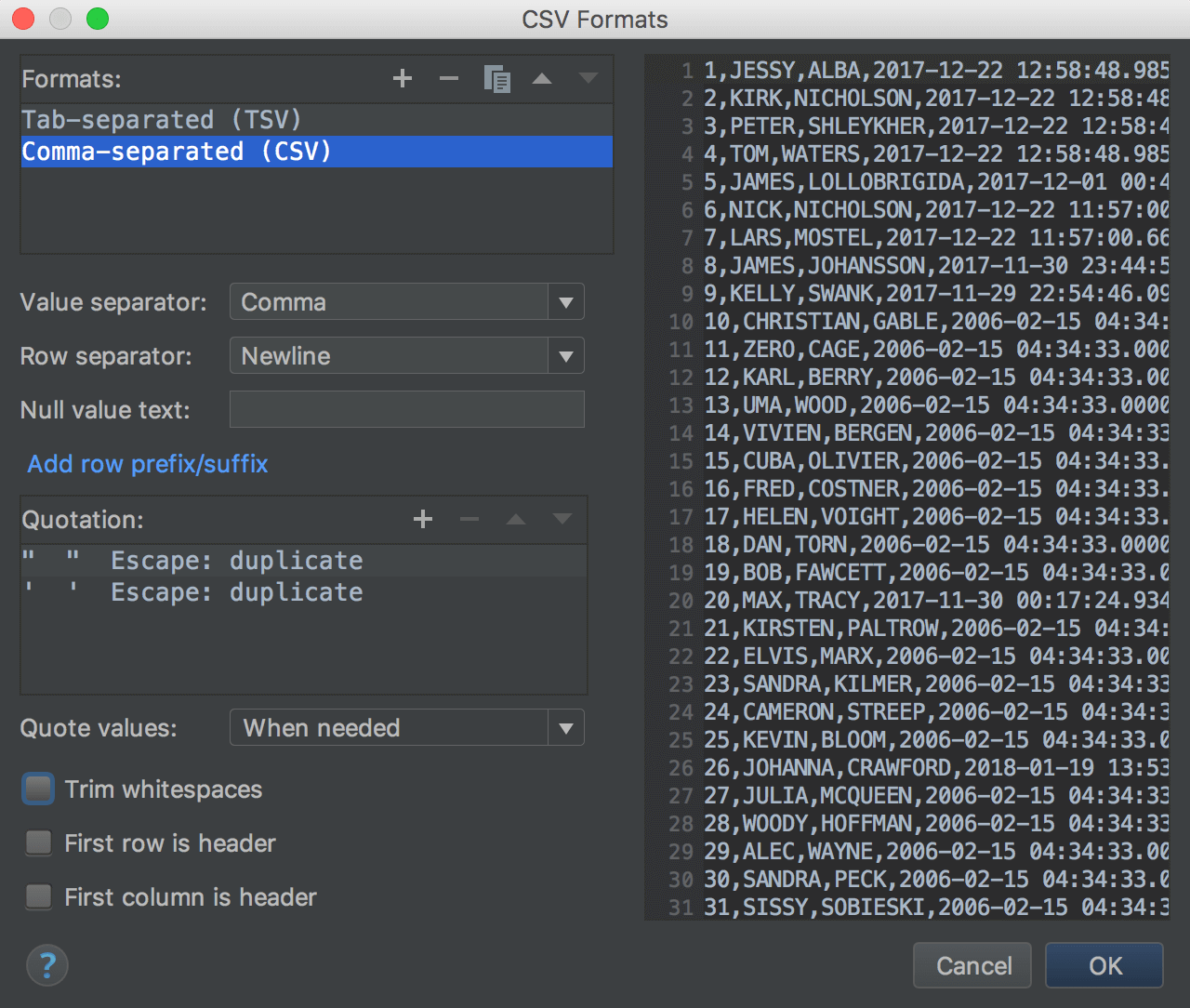

CSV, TSV

There are two pre-built formats in the list: Comma-Separated Values and Tab-Separated Values. You can create any custom format based on DSV. For instance, it can be Confluence Wiki Markup.

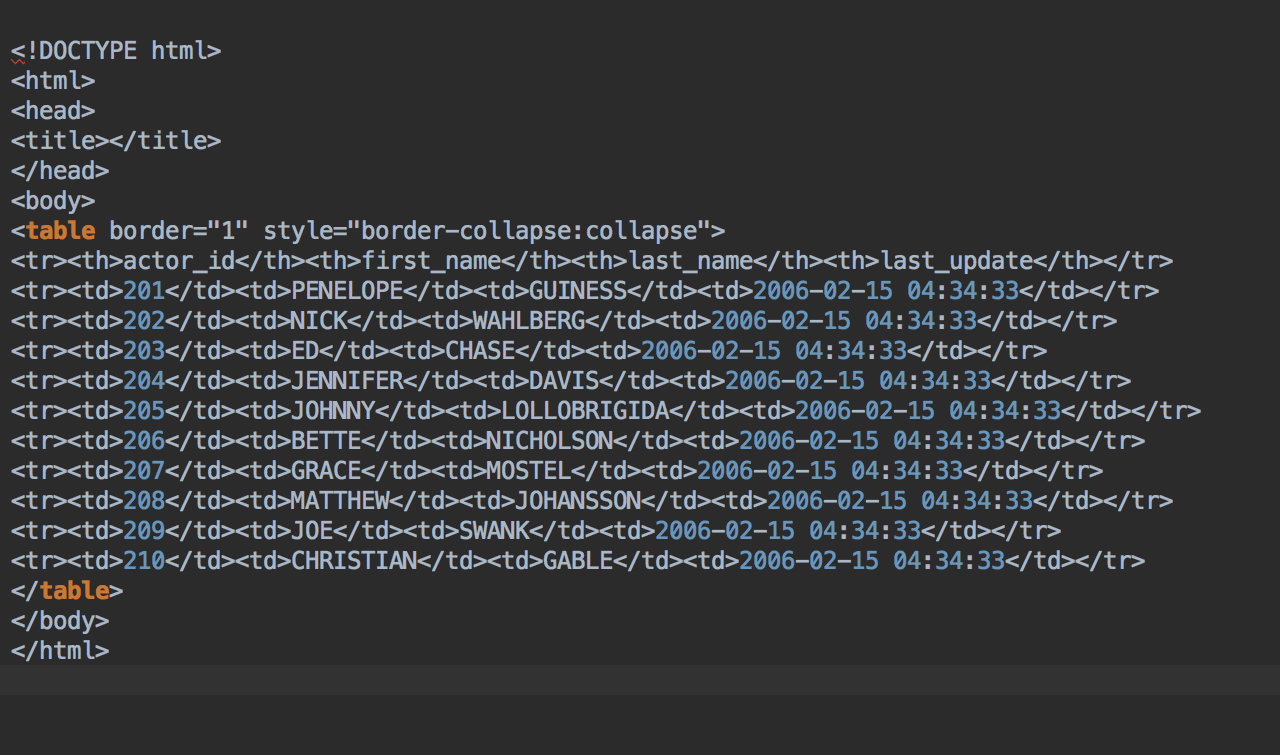

HTML/XML

There is one pre-built extractor you cannot change: HTML table. There are also scripted extractors: HTML-Groovy.html.groovy, XML-Groovy.xml.groovy and others. You can modify these extractors.

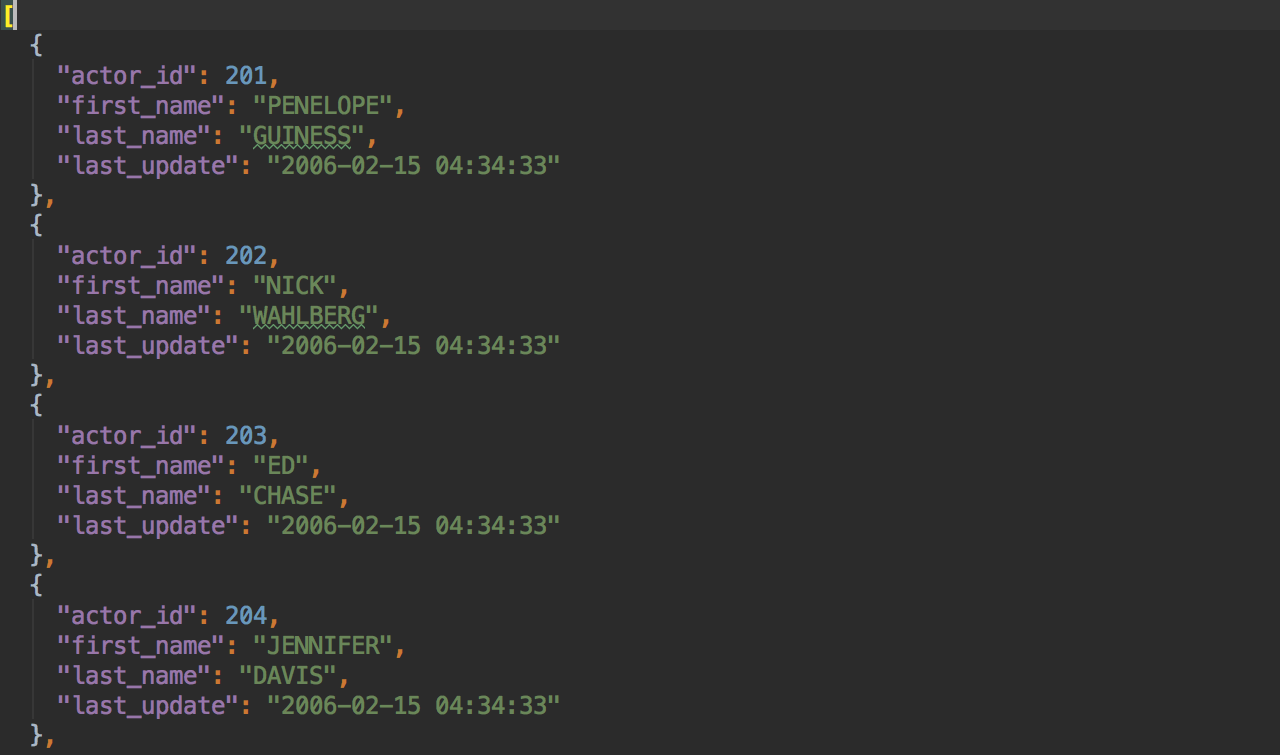

JSON

JSON-Groovy.json.groovy extractor will export your table or result-set to JSON.

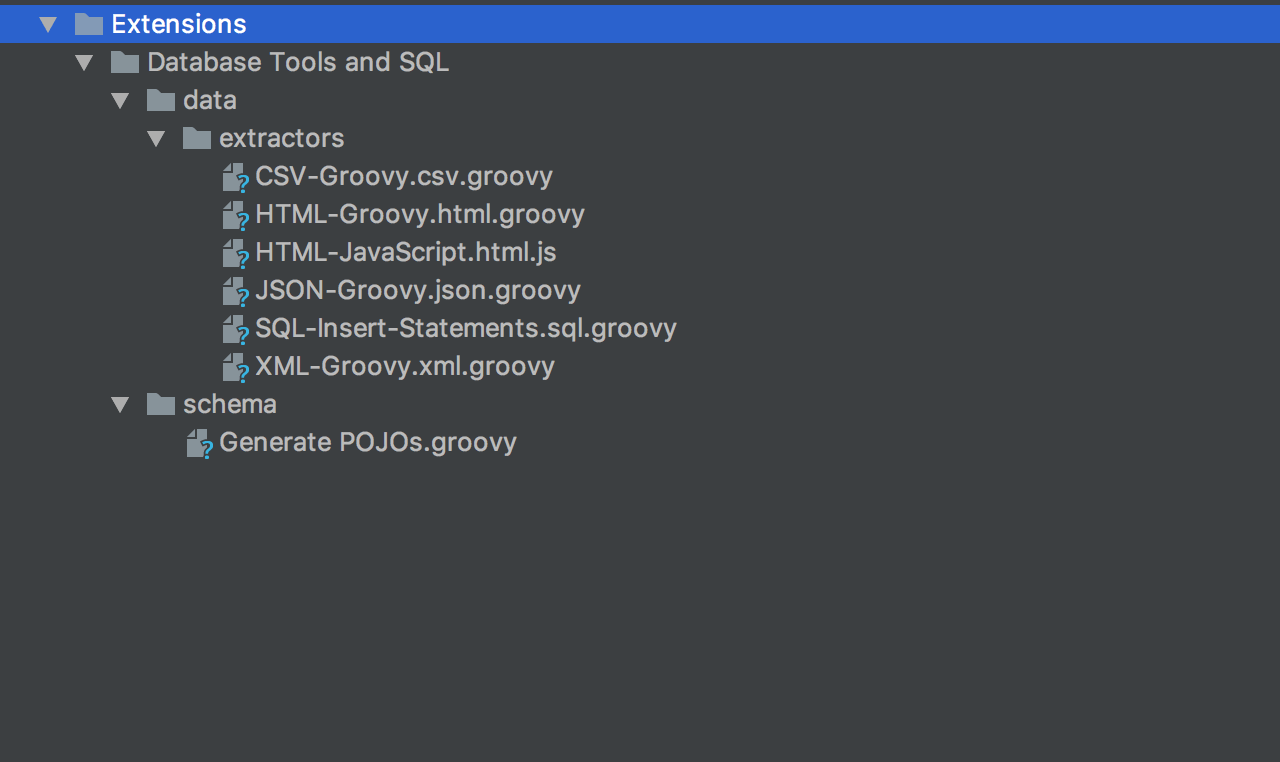

Custom formats

For more complicated cases, consider using scripting extractors. Several of them are already available such as CSV-Groovy.csv.groovy, HTML-Groove.html.groovy, and others. Select Go to scripts directory in the extractor menu to navigate to the folder where they are stored.

These scripts are written in Groovy, but they can also be written in JavaScript and are usually located in Scratches and Consoles/Extensions/Database Tools and SQL/data/extractors. Modify existing extractors or add your own extractors here.

To learn more about creating extractors, please, read this tutorial.

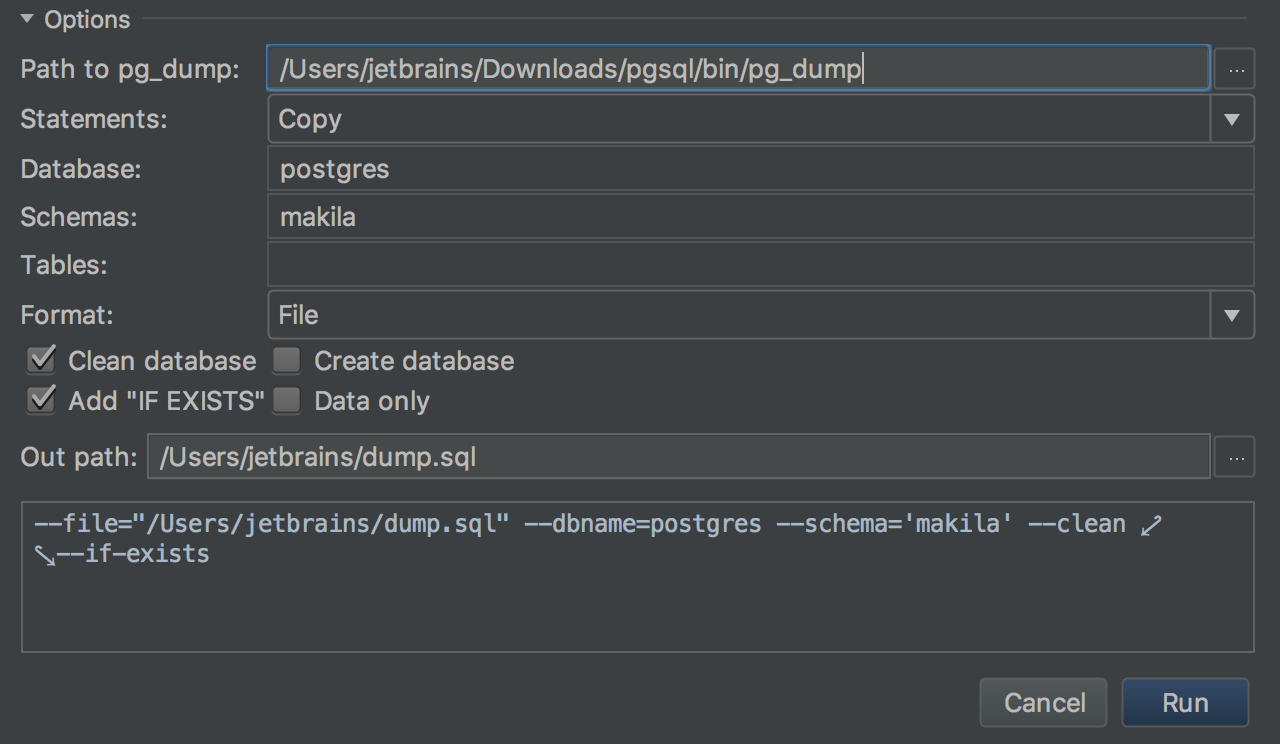

Dump/restore tools

We've integrated DataGrip with mysqldump and pg_dump. To dump the objects, use the Dump with… context menu option. Restore tools for MySQL and PostgreSQL also can be accessed from the context menu. In the case of PostgreSQL, the restore operation can be done with pg_dump or psql: there is a way to choose in the Restore dialog.