Understanding Continuous Delivery

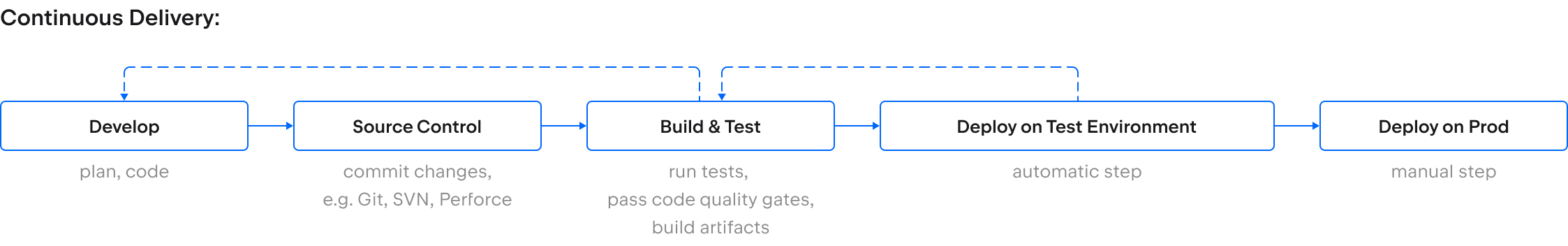

Continuous delivery, or CD, is the practice of automating the manual steps involved in preparing software for release to production.

What is continuous delivery?

Continuous delivery (CD) builds on continuous integration (CI) by automating the steps involved in preparing software for release to production.

Each time you merge code changes into a designated branch, your continuous integration process runs a series of checks, including automated unit tests, and creates a build. Continuous delivery extends that process by automatically deploying each build to a series of testing and staging environments and running further automated tests.

These tests can include integration tests, UI tests, performance tests (such as load, soak, and stress tests), and end-to-end tests. Depending on your industry, you might also use continuous delivery to run security tests, accessibility tests, and manual user acceptance or exploratory testing. Once a build has passed each stage successfully, it’s considered ready for release to production.

As with continuous integration, the key to an effective continuous delivery practice is to automate as much of the process as possible. This includes providing feedback on each stage and alerting the team whenever the build fails to pass a stage so you can address the issue quickly.
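
The flow described above (run each stage in order, report on every stage, and stop as soon as one fails) can be sketched in a few lines of Python. This is only an illustrative model, not the interface of any particular CI/CD tool: the names `Stage`, `PipelineResult`, `run_pipeline`, and the `notify` callback are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Stage:
    """One pipeline stage (hypothetical model)."""
    name: str
    check: Callable[[str], bool]  # receives the build ID, returns True on pass


@dataclass
class PipelineResult:
    build_id: str
    passed: List[str]
    failed_stage: Optional[str]

    @property
    def ready_for_release(self) -> bool:
        # A build is release-ready only if no stage failed.
        return self.failed_stage is None


def run_pipeline(
    build_id: str,
    stages: List[Stage],
    notify: Callable[[str], None],
) -> PipelineResult:
    """Run stages in order, give feedback on each, and stop at the first failure."""
    passed: List[str] = []
    for stage in stages:
        if stage.check(build_id):
            passed.append(stage.name)
            notify(f"{build_id}: {stage.name} passed")
        else:
            # Alert the team immediately and skip the remaining stages.
            notify(f"{build_id}: {stage.name} FAILED")
            return PipelineResult(build_id, passed, stage.name)
    return PipelineResult(build_id, passed, None)
```

In practice, `check` would deploy the build to an environment and run a test suite there, and `notify` would post to whatever channel the team already watches.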

Continuous delivery vs. continuous deployment

Both continuous delivery and continuous deployment involve automatically deploying builds to environments and running automated tests. As a result, the two terms are sometimes used interchangeably. However, there is a useful distinction between the two.

With continuous deployment, a code change is automatically deployed to production every time it successfully passes each testing stage. In contrast, continuous delivery involves a manual step for the final stage – releasing your software to production.
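
One way to picture the difference is as a single decision at the end of the pipeline. The sketch below is a simplified model under assumed names (`ReleaseMode`, `should_release`, and the `manually_approved` flag are hypothetical): in both modes a build must pass every stage, but only continuous delivery also waits for a person to approve the release.

```python
from enum import Enum


class ReleaseMode(Enum):
    CONTINUOUS_DELIVERY = "delivery"
    CONTINUOUS_DEPLOYMENT = "deployment"


def should_release(
    all_stages_passed: bool,
    mode: ReleaseMode,
    manually_approved: bool = False,
) -> bool:
    """Decide whether a build goes to production (illustrative model only)."""
    if not all_stages_passed:
        # A failing build is never released, whatever the mode.
        return False
    if mode is ReleaseMode.CONTINUOUS_DEPLOYMENT:
        # Passing every stage is enough: the release happens automatically.
        return True
    # Continuous delivery: the build is ready, but a person decides when to ship.
    return manually_approved
```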

While continuous deployment might sound like the ideal goal for any software development team, there are good reasons why many teams choose to practice continuous delivery.

Read more about continuous delivery vs. continuous deployment.

Why continuous delivery?

The final stage of a CI/CD process involves deploying your code changes to production. With continuous delivery, the decision to release to production is a manual step, even though the release process itself is automated.

Some teams practice continuous delivery as a stepping stone towards continuous deployment. Triggering the final deployment to production manually provides a safety net while you build confidence in your automated tests and checks. In this case, you might choose to practice continuous delivery for several months before making the jump to deploying every successful code change to production automatically.

However, deploying updates to your software several times a day, or even several times an hour, is not always the best choice. For versioned software – including mobile apps, APIs, embedded software, or desktop products – it often makes sense to group changes into larger releases. Users of installed products don’t expect to have to update their apps every few hours, while upgrading to a new API version can significantly impact your customers. Choosing between continuous delivery and deployment is about making the right decision for your business and your users.

Putting together a continuous delivery pipeline

Although the exact build steps, environments, and tests will depend on your product and organization, the following general principles will help you build your continuous delivery process:

- Start with CI: Implementing continuous integration before continuous delivery makes sense in most cases. Since CI primarily affects the development team, this provides an opportunity to get used to automating the build, test, and deployment process before involving outside stakeholders.

- Design your CD process for fast feedback: Discovering issues early makes your development process more efficient. Some stages can be run in parallel, such as automated UI tests on builds for different platforms. Equally, longer-running or resource-intensive performance tests can be delayed until the build successfully passes previous stages.

- Engage with stakeholders: DevOps, the philosophy behind CI/CD, encourages development teams to break down silos and consider the entire software development process. When designing your CD pipeline, involve all those who participate in the current release process – from operations and security to marketing and support.

- Automate your tests: Automated tests are essential for continuous delivery as they provide reliable and fast validation that your software behaves as intended. If you don’t have automated tests, prioritize the areas with the greatest impact and build up test coverage incrementally.

- Reuse the same build artifact: To avoid introducing inconsistencies, deploy the same build artifact from the CI stage to each pre-production environment and production itself.

- Refresh test environments automatically: Ideally, test environments should be refreshed for each new build in your CI/CD process. Containers and an infrastructure-as-code approach make it easier to tear down and spin up new environments as needed.

- Consider stakeholders’ needs: The exception to the previous point is any staging environments used by support, sales, or marketing teams to familiarize themselves with new features. These teams may prefer environments to be updated on request to avoid disrupting work in progress.

- Embrace DevSecOps: The infosec or cybersecurity team is often seen as a barrier to frequent releases because of the time involved in running a security audit and the lengthy reports that follow. Take a DevSecOps approach and weave security requirements into your pipeline from the start.

- Consider manual testing requirements: Depending on your business, you may want to incorporate some manual exploratory testing to help identify unexpected failure modes. Instead of requiring manual tests of every code change, consider having optional steps or alternative pipelines that run weekly or monthly.

- Automate the release: Although continuous delivery means the decision to release to production is manual, the release itself should be automated. You should be able to deploy any good build to live with a single command.
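
The "reuse the same build artifact" principle from the list above can be enforced mechanically. One possible approach, sketched here under assumptions, is to record a SHA-256 digest when the CI stage produces the artifact and to refuse any deployment whose bytes no longer match it. The function names and the `deploy` callback are hypothetical stand-ins for whatever your tooling provides.

```python
import hashlib
from typing import Callable


def sha256_digest(artifact: bytes) -> str:
    """Return the hex SHA-256 digest of the artifact's bytes."""
    return hashlib.sha256(artifact).hexdigest()


def deploy_verified(
    artifact: bytes,
    recorded_digest: str,
    environment: str,
    deploy: Callable[[str, bytes], None],
) -> str:
    """Deploy the artifact only if it matches the digest recorded at build time.

    `deploy` is a hypothetical callback that performs the actual deployment.
    """
    actual = sha256_digest(artifact)
    if actual != recorded_digest:
        raise ValueError(
            f"refusing to deploy to {environment}: artifact digest {actual} "
            f"does not match recorded digest {recorded_digest}"
        )
    deploy(environment, artifact)
    return actual
```

Calling `deploy_verified` with the same artifact and digest for each pre-production environment and then for production gives a single, repeatable entry point for every deployment, which also fits the "single command" goal of the last item in the list.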

The value of continuous delivery

Continuous delivery enables teams to release software faster and more frequently while reducing the number of bugs that make it into production. The key to achieving this is ensuring that your code is always ready to release by continuously testing your changes and addressing issues as soon as they are discovered.

Beyond that, continuous delivery offers multiple additional benefits:

- Releasing more frequently means you accelerate your time to market, getting new features, fixes, and enhancements to users faster.

- The continuous testing process means that you get rapid feedback on your work. If a recent change introduces a vulnerability, causes your app to hang in certain circumstances, or causes an API call to fail, you find out about it much sooner than you would with a traditional release testing process.

- Discovering issues earlier makes for a more efficient development process. Not only is the change still relatively fresh in your mind, but there is also less risk that other code changes that rely on the faulty code have been added.

- Automating repetitive build, test, and release tasks ensures they are performed consistently and reduces the risk of mistakes while freeing up team members to focus on adding value.

- Investing in automated tests helps you test your software more thoroughly. This might include testing consistently across multiple platforms, checking you meet accessibility requirements, or baselining the performance of your product or service.

- Automatically refreshing environments and deploying builds helps you use infrastructure more efficiently, whether that’s on-premises servers or a cloud-hosted build farm.

- Automating deployments to staging sites ensures product, marketing, and support teams can preview new features without additional manual effort from development or operations teams.

- Continuous delivery makes your release process robust and repeatable while giving you control over exactly when you release. With a CD process in place, you can choose to deliver small improvements weekly, daily, or even hourly.

The challenges of continuous delivery

Implementing a continuous delivery process can come with a few challenges:

- Cross-team cooperation: You’ll likely need cooperation from multiple parts of your organization, such as operations, infrastructure, and security teams. While breaking down silos can be challenging in the short term, it leads to better collaboration and effectiveness in the long term.

- Time investment: Automating your build, test, and release processes takes time. However, taking an iterative approach and building up your process over time makes this more manageable. Collecting metrics, such as defect rates and build times, and comparing them to manual procedures is an effective way to demonstrate the return on investment to stakeholders.

- Scaling challenges: As you scale your continuous delivery process, you will likely want to start running multiple builds and tests in parallel. At this point, the number of available servers can become a limiting factor. After optimizing the performance of your pipeline, consider moving to cloud-hosted infrastructure to scale your build farm as needed.
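
To make the scaling point concrete, the sketch below models parallel jobs that compete for a fixed number of build machines. It is a toy model: threads stand in for build agents, and `run_parallel` and `max_agents` are names invented for the example. When there are more jobs than agents, the extra jobs simply wait in a queue, which is how the number of available servers becomes the limiting factor.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def run_parallel(
    jobs: Dict[str, Callable[[], bool]],
    max_agents: int,
) -> Dict[str, bool]:
    """Run independent jobs concurrently, with at most `max_agents` at a time.

    Each job is a callable that returns True on success. Worker threads
    stand in for build agents in this simplified model.
    """
    with ThreadPoolExecutor(max_workers=max_agents) as pool:
        # Submit everything up front; jobs beyond max_agents queue until a
        # worker becomes free.
        futures = {name: pool.submit(job) for name, job in jobs.items()}
        return {name: future.result() for name, future in futures.items()}
```

Raising `max_agents` shortens the queue only as long as the jobs are genuinely independent, which is one reason to optimize the pipeline itself before adding capacity.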

Continuous delivery best practices

Building a continuous delivery process might seem daunting, but when done right, it can dramatically speed up software releases while minimizing bugs.

Central to effective implementation of continuous delivery is adopting the DevOps mindset. Rather than viewing the software development process as a one-way conveyor belt – with requirements, code, and reports handed off from one team to the next – DevOps champions collaboration and rapid feedback from short, iterative cycles.

Changing your “definition of done” can help you adopt this mentality. Instead of considering the task complete when you hand your code over for testing, your new feature or code change is only done once it’s released live. If an issue is found at any stage in the pipeline, communicating that feedback promptly and collaborating on a fix makes for a quicker resolution than lengthy reports that must go through a change board for approval. That’s what continuous delivery is all about.

For more tips to help you get started with continuous delivery, read our guide to CI/CD best practices.

Conclusion

Continuous delivery makes it easier and quicker to release software, enabling frequent deployments to production. Instead of a large quarterly or annual release, smaller updates are delivered frequently. This not only means users get new functionality and bug fixes sooner, but it also allows you to see how your software is used in the wild and adjust plans accordingly.

While some organizations prefer to maintain control over the final step in the release process, for others, the logical conclusion of a CI/CD pipeline is to automate the release to live, using a practice known as continuous deployment. You can learn more about this in the next section of our CI/CD guide.

How TeamCity can help

TeamCity is a CI/CD platform with extensive support for a wide range of build tools, testing frameworks, containers, and cloud infrastructure providers. Whether you want to host your build machines on-site, in the cloud, or use a combination of both, TeamCity will coordinate build tasks for maximum efficiency.

TeamCity’s customizable pipeline logic means you can choose when to run processes in parallel – such as tests on different platforms – and when to require successful completion before moving to the next stage. Configurable notifications provide you with the information you need wherever you’re working, helping you avoid unnecessary interruptions. Finally, detailed results help you ensure your path to production remains clear.