Run tests

Run tests directly in a file or folder

If your tests do not require any specific actions before they start, and you do not want to configure additional options, you can run them in one of the following ways:

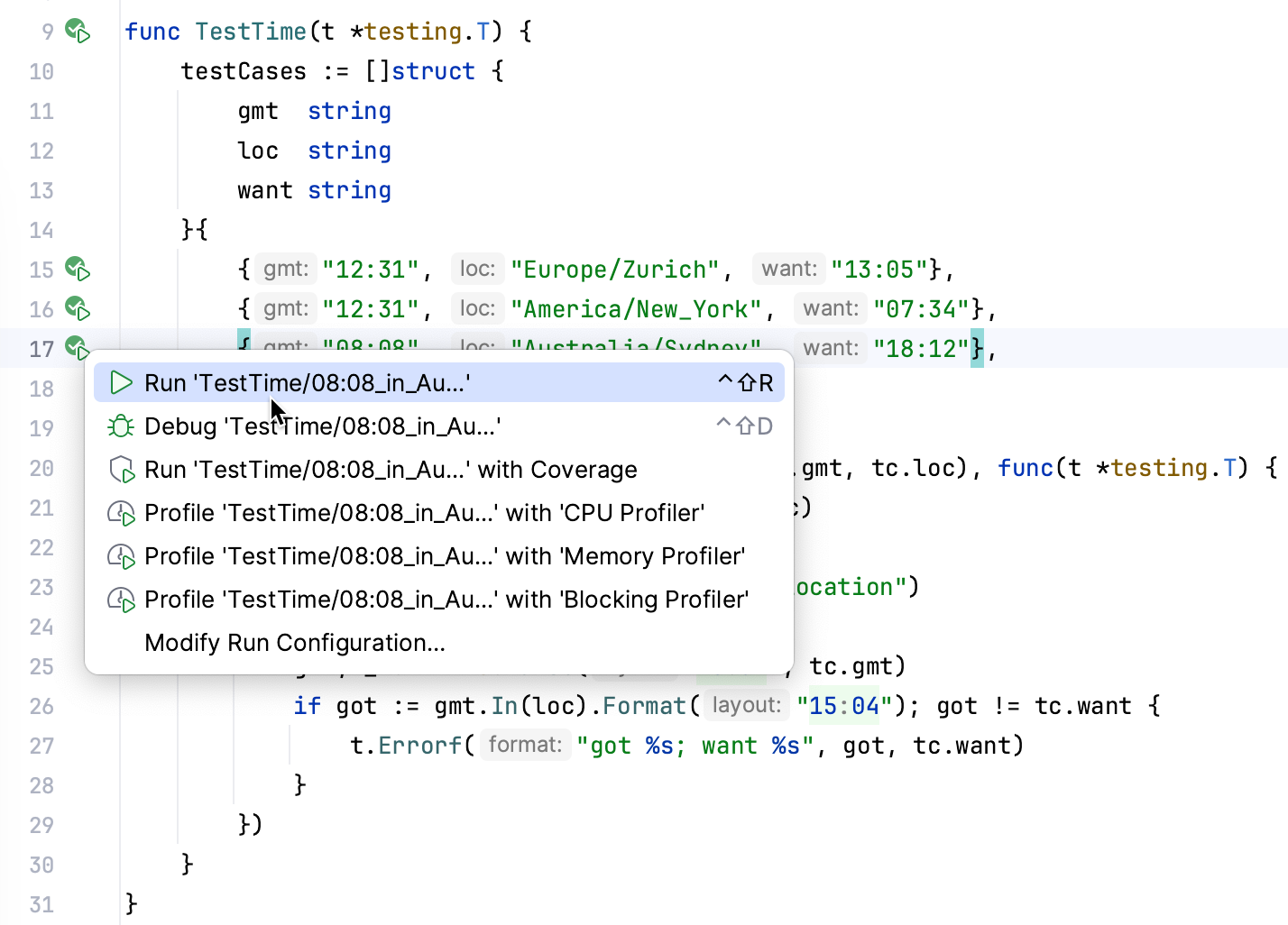

Place the caret in the test file to run all tests in that file, or in a test method to run only that method, and press Ctrl+Shift+F10. Alternatively, click the gutter icon next to the test method and select Run '<test name>' from the list.
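GoLand attaches these gutter icons to functions that go test recognizes as tests: functions named TestXxx that take a single *testing.T parameter, in files whose names end in _test.go. The following is a minimal sketch that assumes a hypothetical Sum function. That function would normally live in a non-test file and is inlined here only so that the example is self-contained.

```go
// sum_test.go (hypothetical file name; test files must end in _test.go)
package main

import "testing"

// Sum is a hypothetical function under test. It would normally be
// declared in a non-test file such as sum.go.
func Sum(nums ...int) int {
	total := 0
	for _, n := range nums {
		total += n
	}
	return total
}

// TestSum is picked up by go test because its name starts with "Test"
// and it takes a *testing.T parameter, so the IDE shows a Run icon next to it.
func TestSum(t *testing.T) {
	if got := Sum(1, 2, 3); got != 6 {
		t.Errorf("Sum(1, 2, 3) = %d; want 6", got)
	}
}
```

Placing the caret inside TestSum and pressing Ctrl+Shift+F10 runs only that test. Placing it elsewhere in the file runs every test in the file.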

The gutter icon changes depending on the state of your test. There are separate icons for:

A set of tests. Use this icon to run all the tests in the file.

New tests.

Successful tests.

Failed tests.

To run all tests in a folder, select this folder in the Project tool window and press Ctrl+Shift+F10 or select Run Tests in 'folder' from the context menu.

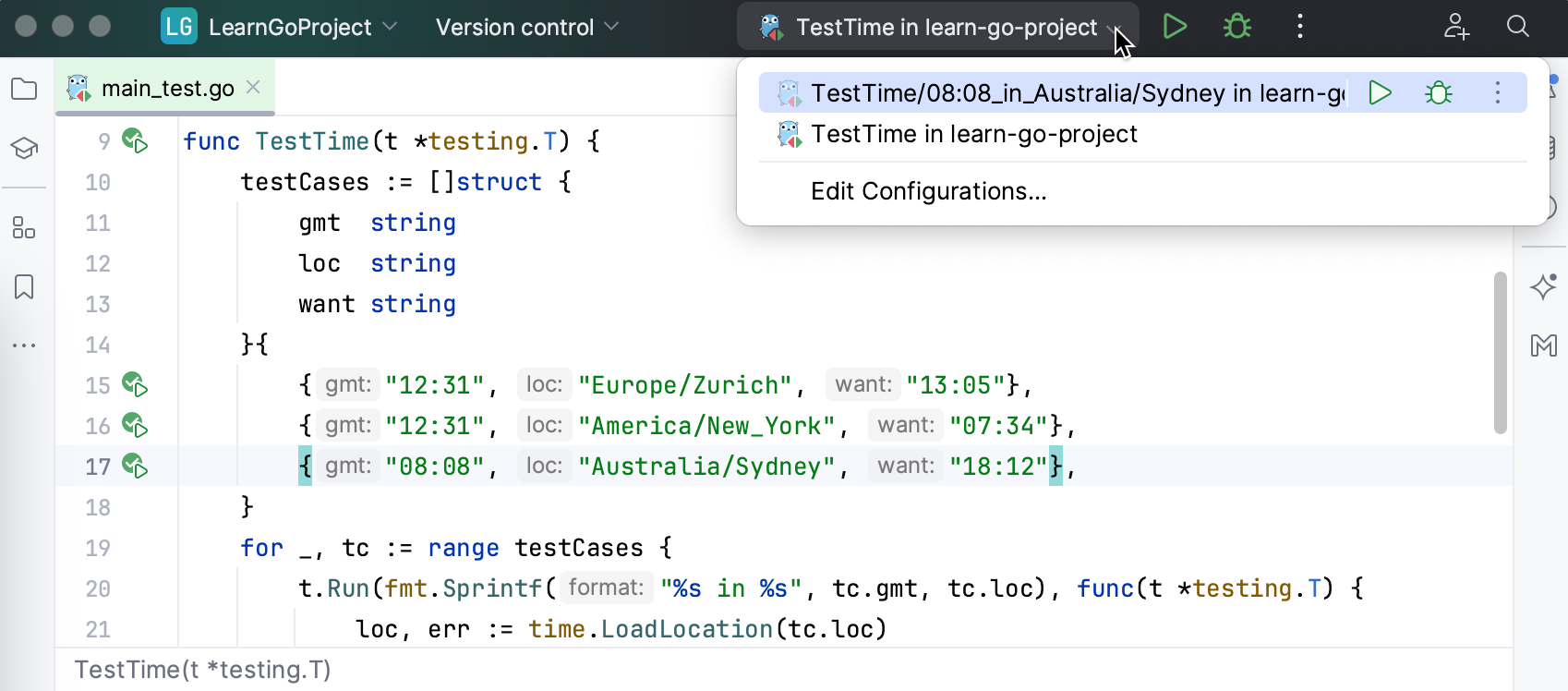

Run tests using the Run widget

When you run a test, GoLand creates a temporary run configuration. You can save temporary run configurations, change their settings, and share them with other members of your team. For more information, refer to Run/debug configurations.

Create a new run configuration or save a temporary one.

Use the Run widget on the main toolbar to select the configuration you want to run.

Click Run or press Shift+F10.

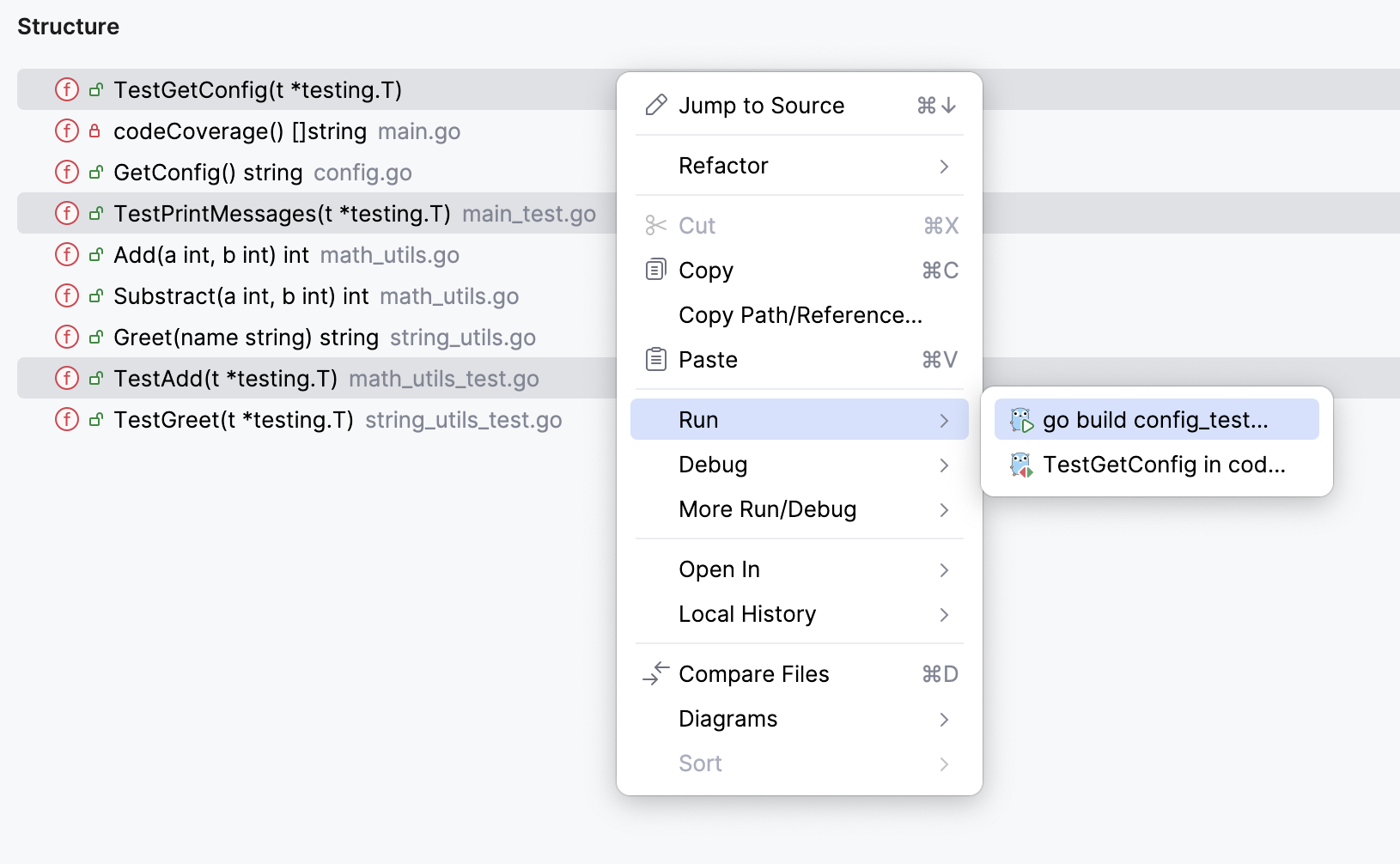

Run tests from Structure

In the Structure tool window, you can select one or several test methods to run. In this case, the IDE also creates a temporary run configuration with these methods that you can save and edit.

In the Project tool window, double-click the Go test file (a file whose name ends in _test.go).

Open the Structure tool window.

In the Structure tool window, right-click one or several test methods and select Run 'method name' (Ctrl+Shift+F10).

Right-click a method in the Structure tool window and select:

Add to temp suite: <Configuration_name> if there is only one configuration with the Pattern test scope.

Add to JUnit Pattern Suite (JUnit) / Add to Temp Suite (TestNG) if there are several configurations with the Pattern test scope. In this case, a popup appears in which you can select the target configuration.

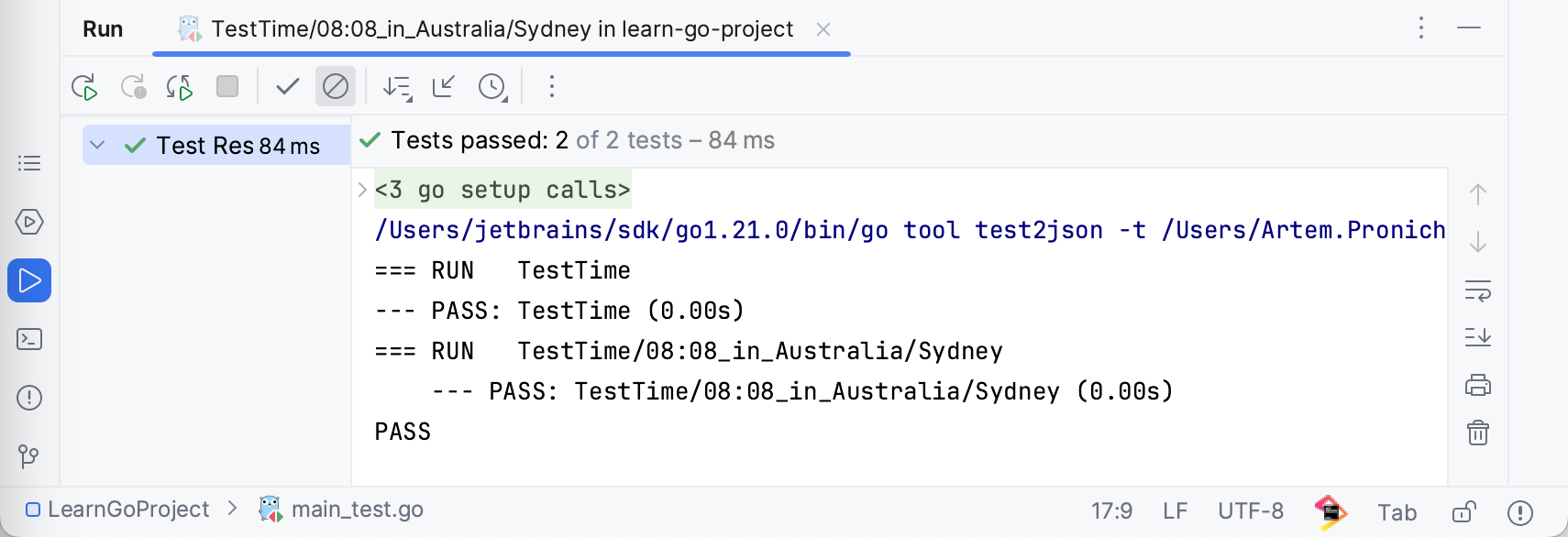

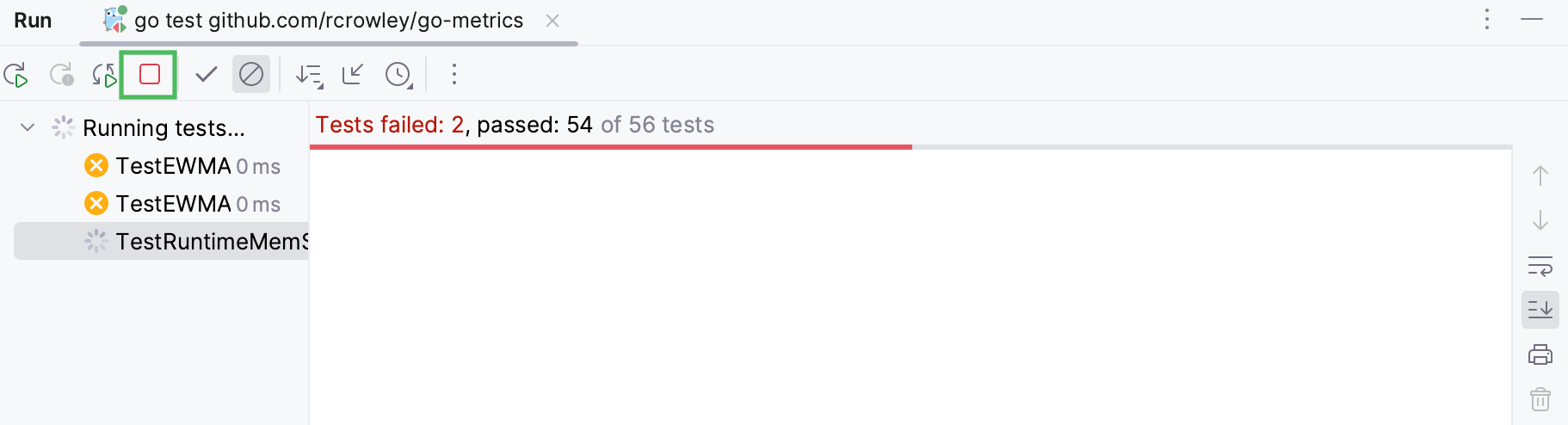

After GoLand finishes running your tests, it shows the results in the Run tool window on the tab for that run configuration. For more information about analyzing test results, refer to Explore test results.

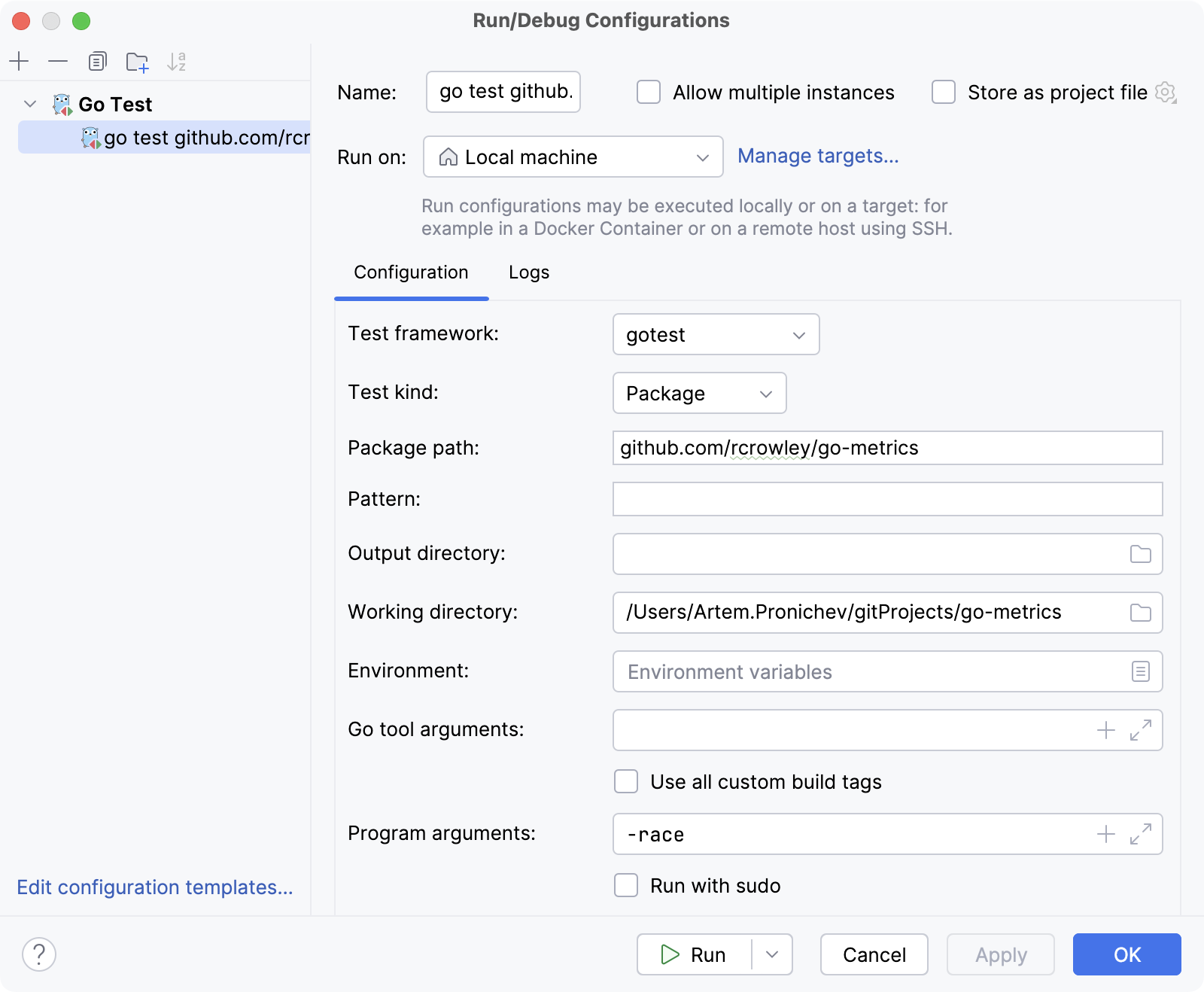

Run tests with test flags

You can run tests with test flags like -race, -failfast, -short, and others. For the full list of flags, refer to the Go documentation at pkg.go.dev.

Open the Run/Debug Configurations dialog.

Click the run/debug configuration that you use to run your application or your tests. In the Program arguments field, specify the flag that you plan to use:

-race: enables data race detection. Supported only on linux/amd64, freebsd/amd64, darwin/amd64, windows/amd64, linux/ppc64le, and linux/arm64 (only for 48-bit VMA).

-test.failfast: does not start new tests after the first test failure.

-test.short: tells long-running tests to shorten their run time.

-test.benchmem: prints memory allocation statistics for benchmarks.
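The -test.short and -test.benchmem flags only have an effect if the test code is written for them. A long-running test checks testing.Short() and skips or shortens itself. -test.benchmem adds allocation statistics to the output of BenchmarkXxx functions. The following is a minimal sketch that assumes a hypothetical, deliberately slow Fib function.

```go
package main

import "testing"

// Fib is a hypothetical, deliberately slow (exponential-time) function
// used only as a test subject.
func Fib(n int) int {
	if n < 2 {
		return n
	}
	return Fib(n-1) + Fib(n-2)
}

// TestFibLarge is slow, so it skips itself when -test.short is set.
func TestFibLarge(t *testing.T) {
	if testing.Short() {
		t.Skip("skipping long-running test in short mode")
	}
	if got := Fib(35); got != 9227465 {
		t.Errorf("Fib(35) = %d; want 9227465", got)
	}
}

// BenchmarkFib runs only when benchmarks are requested (for example, with
// -test.bench); -test.benchmem then adds B/op and allocs/op columns.
func BenchmarkFib(b *testing.B) {
	for i := 0; i < b.N; i++ {
		Fib(20)
	}
}
```

Tests that never call testing.Short() run in full regardless of -test.short.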

Run all tests in a project

Open the Run/Debug Configurations dialog from the main menu.

Click the Add New Configuration icon and select Go Test.

From the Test kind drop-down list, select one of the following options:

Package: to run all tests in the package specified in the Package path field.

Directory: to run all tests in the directory specified in the Directory field.

Click Run.

Fuzz testing

Fuzz testing automates your tests by continuously generating varied input and passing it to the code under test. The input is generated from the seed data that you provide in f.Add("mySampleData").

The f.Add() method accepts the following data types: string, []byte, rune, int, int8, int16, int32, int64, uint, uint8, uint16, uint32, uint64, float32, float64, bool.
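A fuzz target is a function named FuzzXxx that takes a *testing.F. Each f.Add call must supply one value per argument of the function passed to f.Fuzz, in the same order and of the same type. The following sketch follows the round-trip pattern from the Go fuzzing tutorial. It assumes a hypothetical Reverse function and checks that reversing a string twice returns the original.

```go
package main

import (
	"testing"
	"unicode/utf8"
)

// Reverse is a hypothetical function under test. It reverses by rune,
// so valid UTF-8 input produces valid UTF-8 output.
func Reverse(s string) string {
	r := []rune(s)
	for i, j := 0, len(r)-1; i < j; i, j = i+1, j-1 {
		r[i], r[j] = r[j], r[i]
	}
	return string(r)
}

// FuzzReverse seeds the corpus with f.Add; with -fuzz, the fuzzing engine
// mutates these seeds to generate new inputs.
func FuzzReverse(f *testing.F) {
	// The fuzz function takes one string, so each f.Add call passes one string.
	for _, seed := range []string{"Hello, world", " ", "!12345", "mySampleData"} {
		f.Add(seed)
	}
	f.Fuzz(func(t *testing.T, orig string) {
		// Invalid UTF-8 does not survive the []rune conversion, so skip it.
		if !utf8.ValidString(orig) {
			t.Skip("input is not valid UTF-8")
		}
		rev := Reverse(orig)
		if !utf8.ValidString(rev) {
			t.Errorf("Reverse(%q) produced invalid UTF-8 %q", orig, rev)
		}
		if doubleRev := Reverse(rev); doubleRev != orig {
			t.Errorf("Reverse(Reverse(%q)) = %q", orig, doubleRev)
		}
	})
}
```

Without -fuzz, go test runs FuzzReverse only against the seed values and any saved corpus entries. With -fuzz, it keeps generating new inputs until a failure occurs or the run is stopped.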

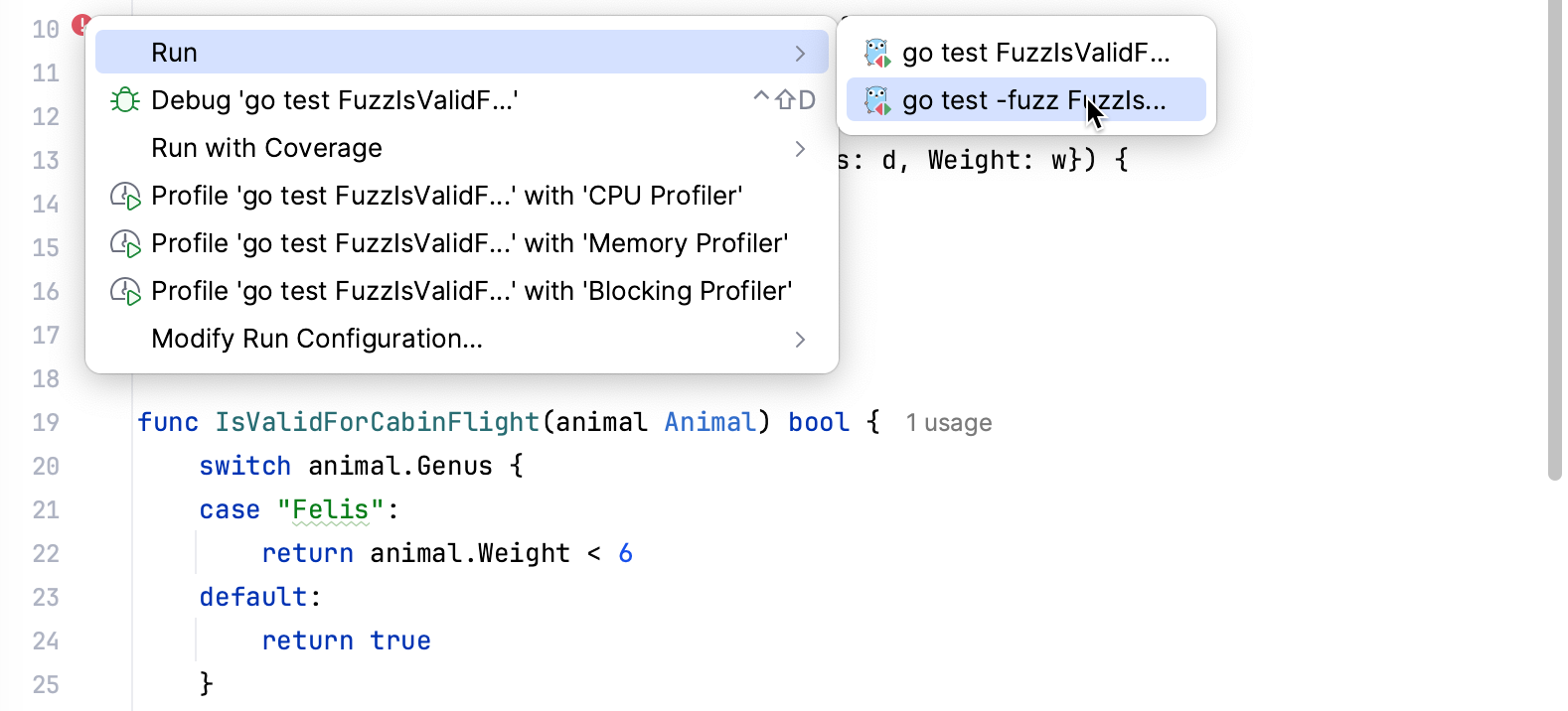

Running fuzz testing

Click the gutter Run Test icon, select Run, and then select the fuzz testing configuration (for example, go test -fuzz FuzzTest).

If the fuzz test fails, click the link to the testdata directory to see which input caused the failure.

To run go test with the failing seed corpus entry, open the file from the testdata directory, click the Run Fuzzing icon in the gutter, and select the necessary configuration.

Debugging fuzz tests

Create a breakpoint by clicking the gutter on the necessary line.

Alternatively, click the line where you want to create a breakpoint and press Ctrl+F8.

Click .

In the Debug popup window, select the desired run/debug configuration.

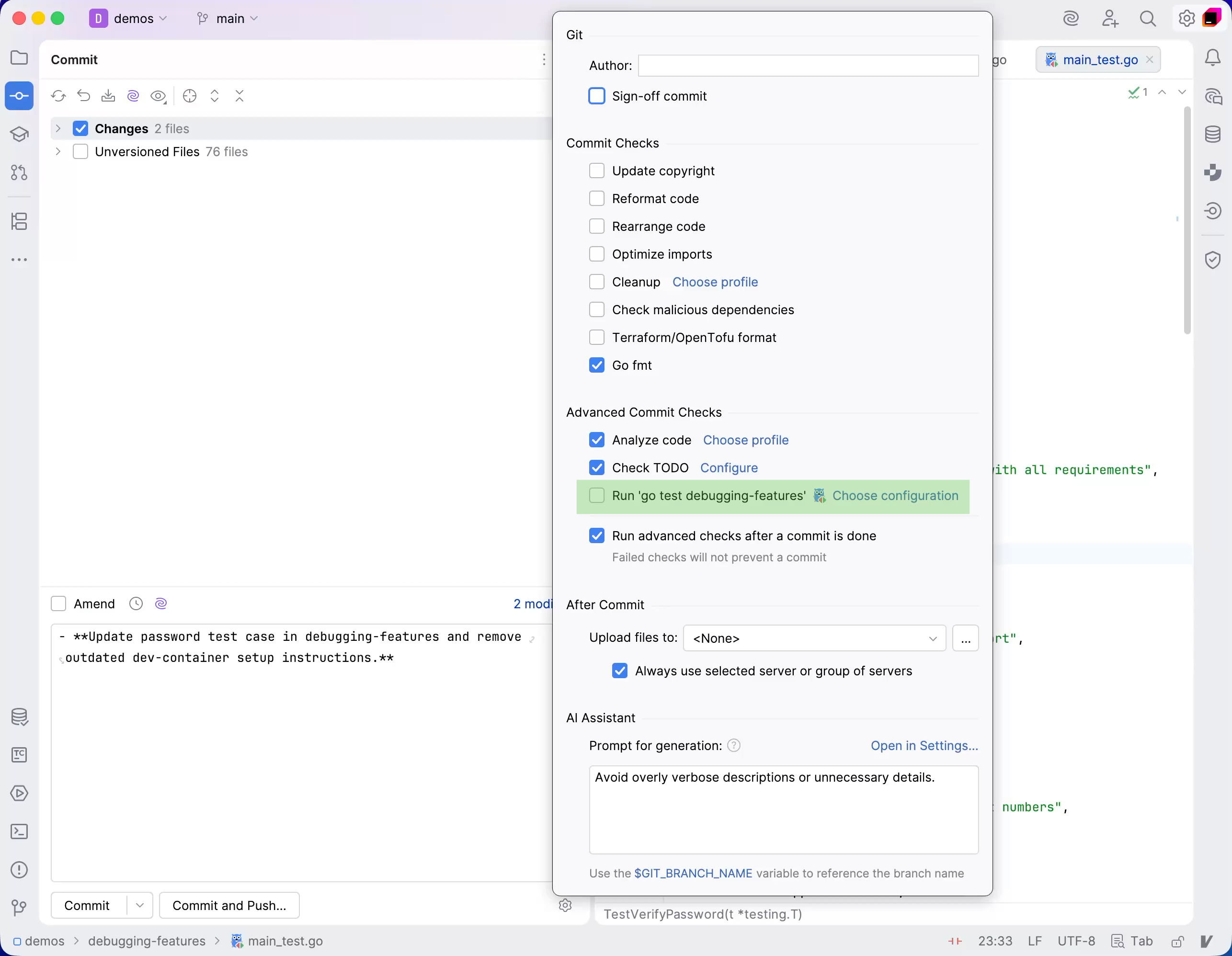

Run tests after commit

To check that your changes do not break the code before you push them, run tests as commit checks.

Set up test configuration

Press Alt+0 to open the Commit tool window and click Show Commit Options.

Under the Advanced Commit Checks menu, next to the Run Configuration option, click Choose configuration and select which configuration you want to run.

After you have set up the test configuration, the specified tests will run every time you make a commit.

Stop tests

Use the following options on the Run toolbar of the tab for the run configuration:

Click the Stop icon or press Ctrl+F2 to terminate the process immediately.

Rerun tests

Rerun a single test

Right-click a test on the tab for the run configuration in the Run tool window and select Run 'test name'.

Rerun all tests in a session

Click the Rerun icon on the Run toolbar or press Ctrl+F5 to rerun all tests in a session.

Rerun failed tests

Click the Rerun Failed Tests icon on the Run toolbar to rerun only failed tests.

Hold Shift and click the Rerun Failed Tests icon to choose whether you want to Run the failed tests again or Debug them.

You can configure the IDE to rerun tests that were ignored or not started during the previous test run together with failed tests. To do so, open the test runner settings on the Run toolbar and enable the Include Non-Started Tests into Rerun Failed option.

Rerun tests automatically

In GoLand, you can enable the autotest-like runner: any test in the current run configuration restarts automatically after you change the related source code.

Click Rerun Automatically on the test results toolbar to enable the autotest-like runner.

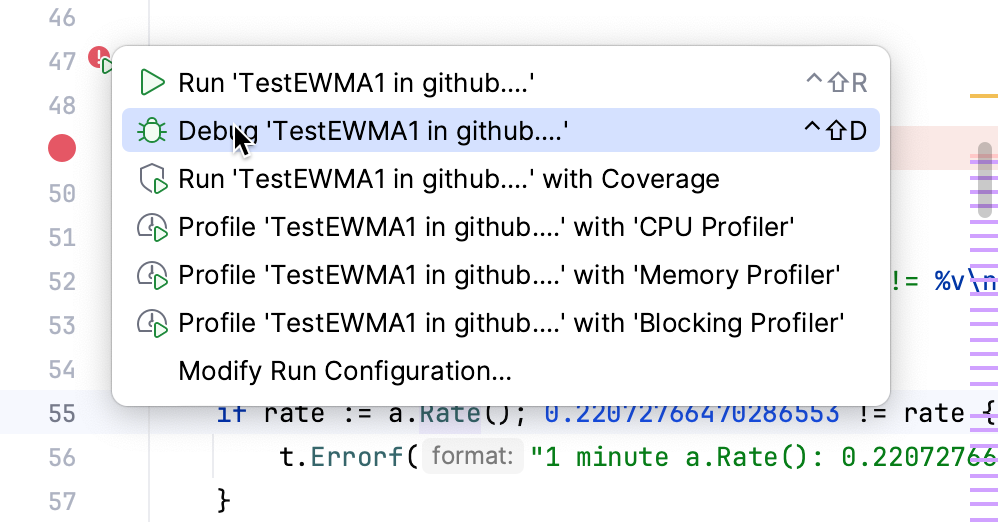

Debug failed tests

If you do not know why a test fails, you can debug it.

In the editor, click the gutter on the line where you want to set a breakpoint.

There are different types of breakpoints that you can use depending on where you want to suspend the program. For more information, refer to Breakpoints.

Right-click the gutter icon next to the failed test and select Debug 'test name'.

The test reruns in debug mode and is suspended at the breakpoint, so you can examine its current state.

You can step through the test to analyze its execution in detail.

Productivity tips

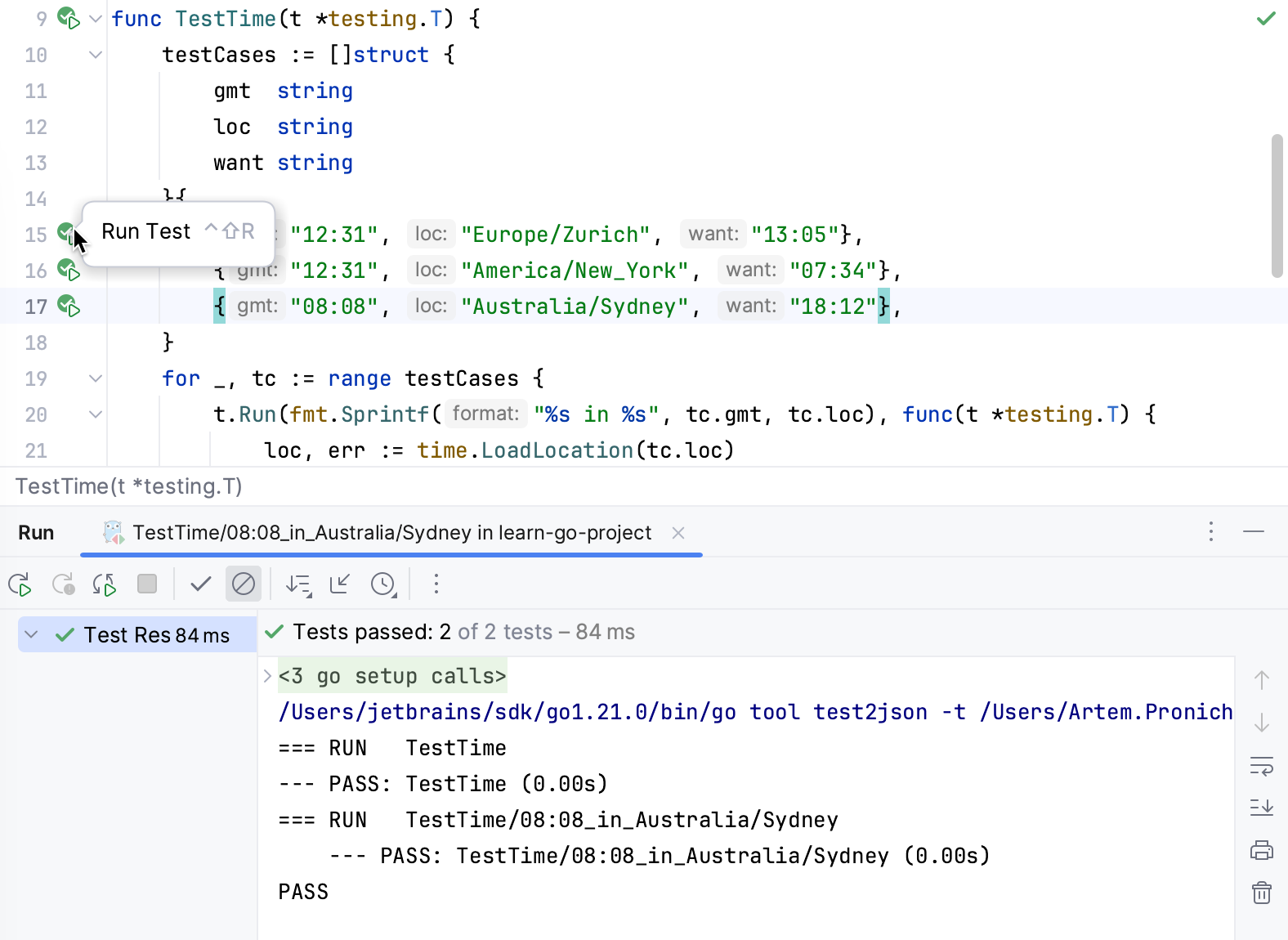

Run individual table tests

You can run individual table tests by using the Run icon in the gutter. You can also navigate to an individual table test from the Run tool window.

Support for table tests currently has the following limitations:

The test data variable must be a slice, an array, or a map. It must be defined in the same function as the t.Run call and must not be used after initialization (except for the range clause in the for loop).

The individual test data entry must be a struct literal.

Loop variables used in a subtest name expression must not be used before the t.Run call.

A subtest name expression can be a test data string field, a concatenation of test data string fields, or a fmt.Sprintf() call with %s and %d verbs.

For example, in the following code snippet, fmt.Sprintf("%s in %s", tc.gmt, tc.loc) is a subtest name expression.

```go
for _, tc := range testCases {
	t.Run(fmt.Sprintf("%s in %s", tc.gmt, tc.loc), func(t *testing.T) {
		loc, err := time.LoadLocation(tc.loc)
		if err != nil {
			t.Fatal("could not load location")
		}
		gmt, _ := time.Parse("15:04", tc.gmt)
		if got := gmt.In(loc).Format("15:04"); got != tc.want {
			t.Errorf("got %s; want %s", got, tc.want)
		}
	})
}
```
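The following sketch shows a complete table test written to stay within the limitations listed for table-test support. The test data is a slice of struct literals declared in the same function as t.Run. It is used only in the range clause, and the subtest name expression is a single string field. The Abs function and the test cases are hypothetical.

```go
package main

import "testing"

// Abs is a hypothetical function under test.
func Abs(x int) int {
	if x < 0 {
		return -x
	}
	return x
}

func TestAbs(t *testing.T) {
	// Test data: a slice of struct literals, defined in the same function
	// as the t.Run call and used only in the range clause below.
	testCases := []struct {
		name string
		in   int
		want int
	}{
		{name: "positive", in: 5, want: 5},
		{name: "negative", in: -5, want: 5},
		{name: "zero", in: 0, want: 0},
	}
	for _, tc := range testCases {
		// Subtest name expression: a single string field of the test data.
		t.Run(tc.name, func(t *testing.T) {
			if got := Abs(tc.in); got != tc.want {
				t.Errorf("Abs(%d) = %d; want %d", tc.in, got, tc.want)
			}
		})
	}
}
```

Each subtest runs synchronously inside t.Run, so the closure reads the current tc on every iteration. This holds even on Go versions before 1.22, which share one loop variable across iterations.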