Run tasks

Chat is the main entry point for interacting with LLMs and agents supported by JetBrains Air. You can ask questions about your project and work with agents to plan and execute tasks.

The workflow includes these steps:

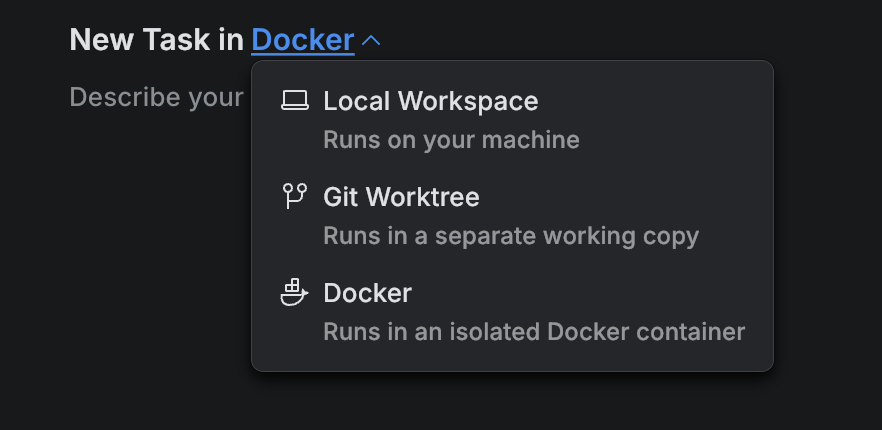

Select the task run environment

Select where the task runs. You can run tasks in your local workspace, in a separate Git worktree, or in a Docker container.

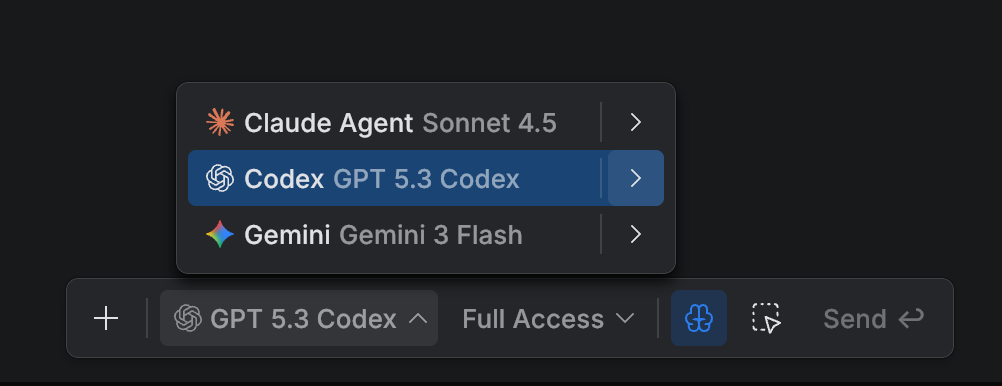

Select an agent

Choose the agent and model to process your request. For more information, refer to Supported agents.

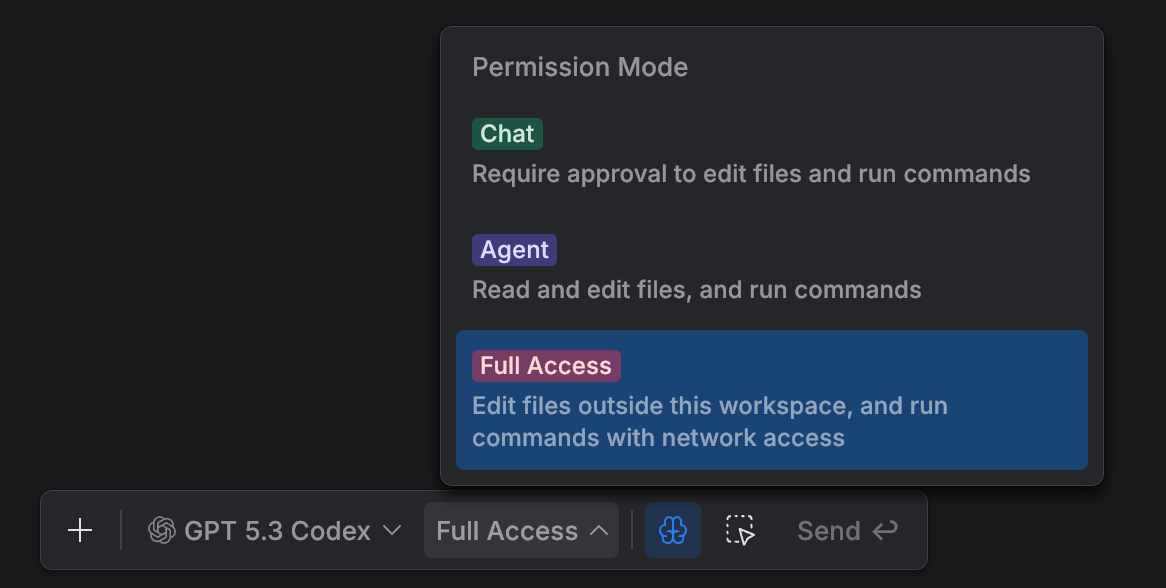

Select a permission mode

Define when the agent can edit files and run commands, and when it must ask for approval. For more information, refer to Permission modes.

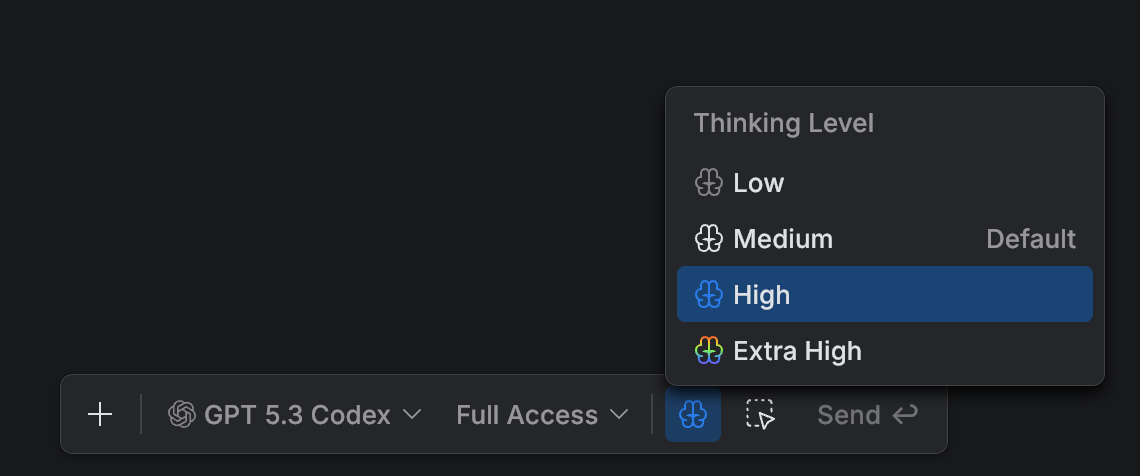

Select a thinking mode

For Gemini CLI and OpenAI Codex, you can set the reasoning level. For more information, refer to Model reasoning, verbosity, and limits (for OpenAI Codex) or Gemini thinking (for Gemini CLI).

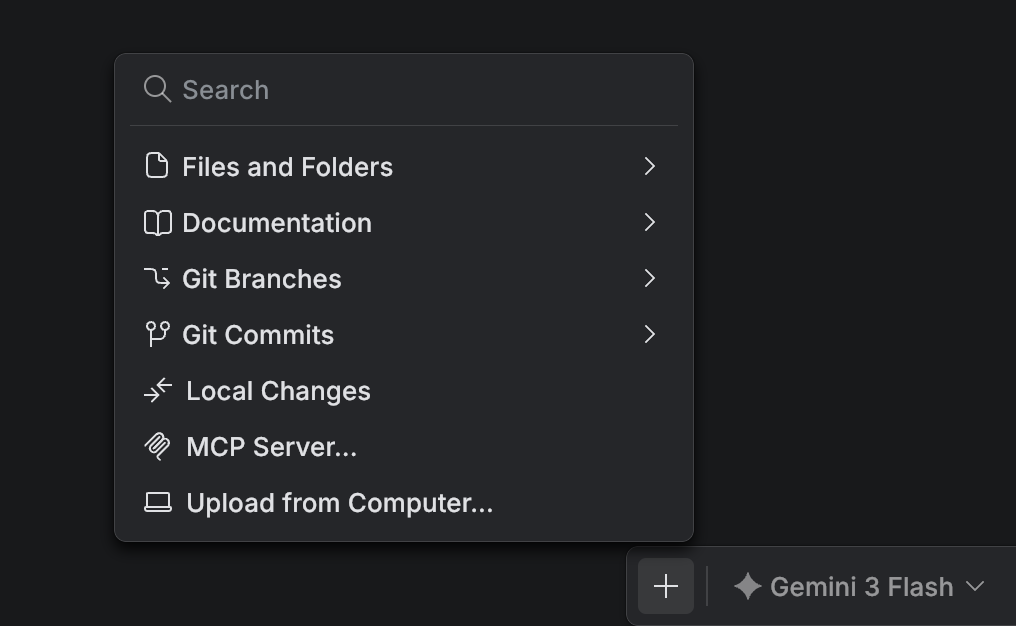

Add context to your request

Provide information relevant to your request. Add files, folders, images, symbols, or other elements that can serve as context.

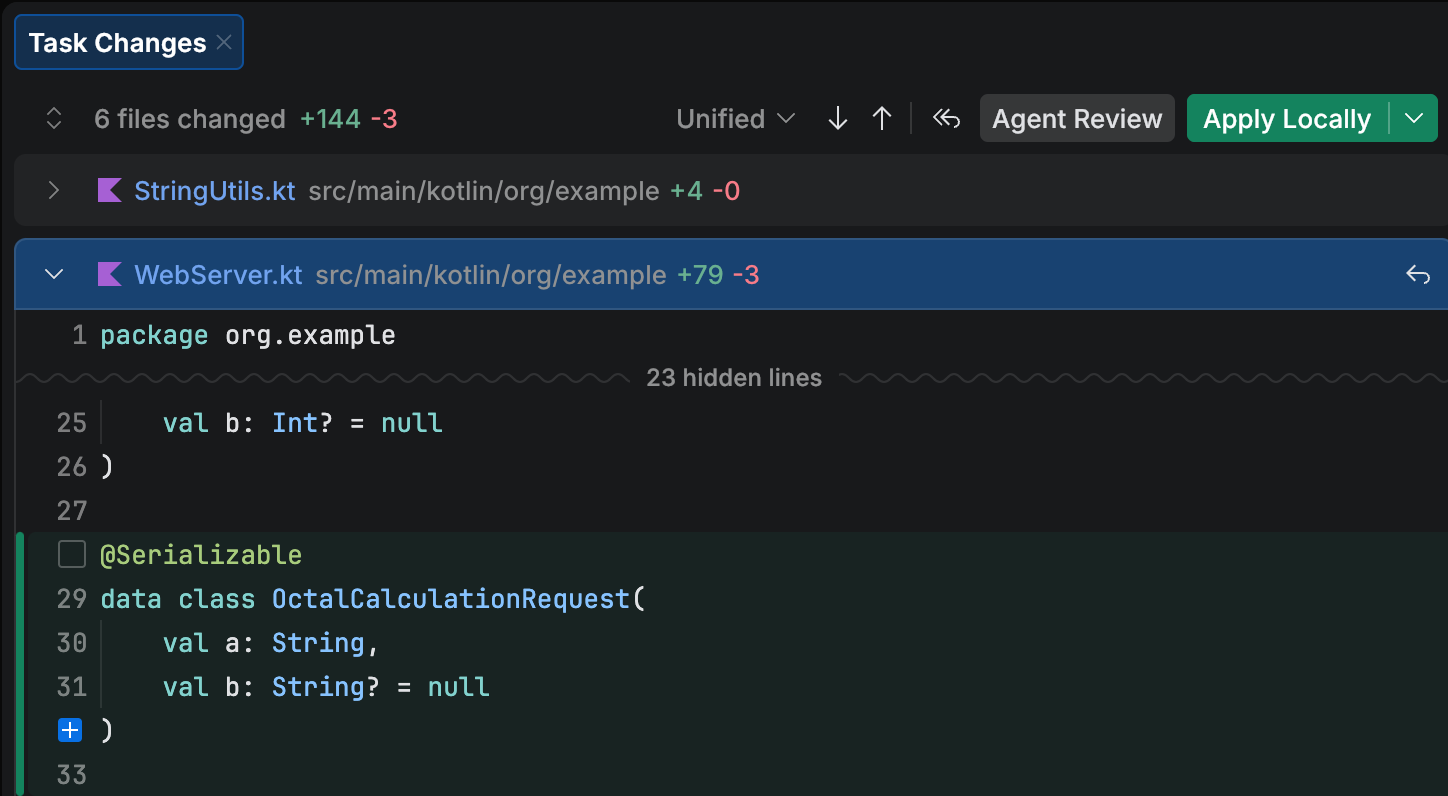

Process the response

JetBrains Air can answer questions, generate code and terminal commands, and edit files. You can review the results and process the proposed changes individually.