Google Test

Google Test and Google Mock are a pair of powerful unit testing tools: the framework is portable, includes a rich set of fatal and non-fatal assertions, provides instruments for creating fixtures and test groups, gives informative messages, and exports the results in XML. Probably the only drawback is the need to build gtest/gmock as part of your project in order to use them.

Google Test basics

If you are not familiar with Google Test, you can find a description of its main concepts below:

Assertions

In Google Test, the statements that check whether a condition is true are referred to as assertions. Non-fatal assertions have the EXPECT_ prefix in their names: they report a failure and let the test continue. Assertions that cause a fatal failure and abort the current function are named starting with ASSERT_. For example:

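Some of the assertions available in Google Test are listed below (in this table, ASSERT_ is given as an example and can be replaced with EXPECT_):

| Category | Assertions |
| --- | --- |
| Logical | ASSERT_TRUE(condition), ASSERT_FALSE(condition) |
| General comparison | ASSERT_EQ(val1, val2), ASSERT_NE(val1, val2), ASSERT_LT(val1, val2), ASSERT_LE(val1, val2), ASSERT_GT(val1, val2), ASSERT_GE(val1, val2) |
| Floating-point comparison | ASSERT_FLOAT_EQ(val1, val2), ASSERT_DOUBLE_EQ(val1, val2), ASSERT_NEAR(val1, val2, abs_error) |
| String comparison | ASSERT_STREQ(str1, str2), ASSERT_STRNE(str1, str2), ASSERT_STRCASEEQ(str1, str2), ASSERT_STRCASENE(str1, str2) |
| Exception checking | ASSERT_THROW(statement, exception_type), ASSERT_ANY_THROW(statement), ASSERT_NO_THROW(statement) |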

Also, Google Test supports predicate assertions which help make output messages more informative. For example, instead of EXPECT_EQ(a, b) you can use a predicate function that checks a and b for equivalency and returns a boolean result. In case of failure, the assertion will print values of the function arguments:

Predicate assertion example

    bool IsEq(int a, int b) {
        return a == b;
    }

    TEST(BasicChecks, TestEq) {
        int a = 0;
        int b = 1;
        EXPECT_EQ(a, b);
        EXPECT_PRED2(IsEq, a, b);
    }

Output

    Failure
    Value of: b
    Actual: 1
    Expected: a
    Which is: 0

    Failure
    IsEq(a, b) evaluates to false, where
    a evaluates to 0
    b evaluates to 1

In EXPECT_PREDN, N is the number of arguments that the predicate function takes, so EXPECT_PRED2 above expects a two-argument predicate. Google Test currently supports predicate assertions of arity up to 5.

Fixtures

Google tests that share common objects or subroutines can be grouped into fixtures. Here is what a generalized fixture looks like:

When used for a fixture, a TEST() macro should be replaced with TEST_F() to allow the test to access the fixture's members and functions:

For more information about Google Test, explore the samples in the framework's repository. For other notable Google Test features, such as value-parameterized tests and type-parameterized tests, refer to Advanced options.

Adding Google Test to your project

Download Google Test from the official repository and extract the contents of googletest-main into an empty folder in your project (for example, Google_tests/lib).

Alternatively, clone Google Test as a git submodule or use CMake to download it (instructions below will not be applicable in the latter case).

Create a CMakeLists.txt file inside the Google_tests folder: right-click it in the project tree and select .

Customize the following lines and add them into your script:

    # 'Google_tests' is the subproject name
    project(Google_tests)

    # 'lib' is the folder with Google Test sources
    add_subdirectory(lib)
    include_directories(${gtest_SOURCE_DIR}/include ${gtest_SOURCE_DIR})

    # 'Google_Tests_run' is the target name
    # 'test1.cpp test2.cpp' are source files with tests
    add_executable(Google_Tests_run test1.cpp test2.cpp)
    target_link_libraries(Google_Tests_run gtest gtest_main)

In your root CMakeLists.txt script, add the add_subdirectory(Google_tests) command to the end, then reload the project.

When writing tests, make sure to add #include "gtest/gtest.h" at the beginning of every .cpp file that contains test code.

Google Test run/debug configuration

Although Google Test provides the main() entry, and you can run tests as regular applications, we recommend using the dedicated Google Test run/debug configuration. It includes test-related settings and lets you benefit from the built-in test runner, which is unavailable if you run tests as regular programs.

To create a Google Test configuration, go to Run | Edit Configurations in the main menu, add a new configuration, and select Google Test from the list of templates.

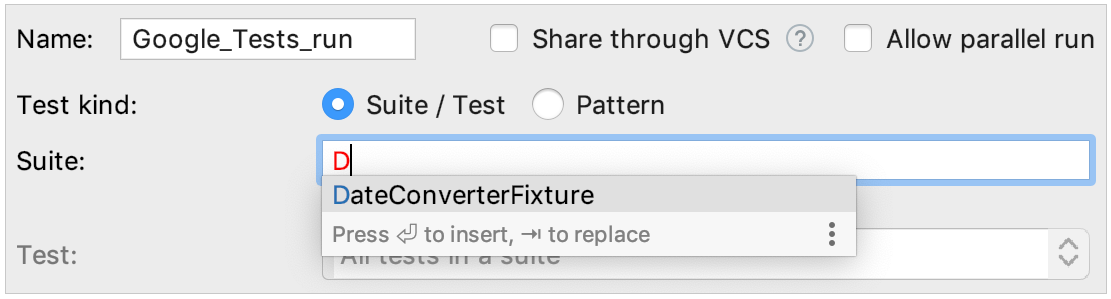

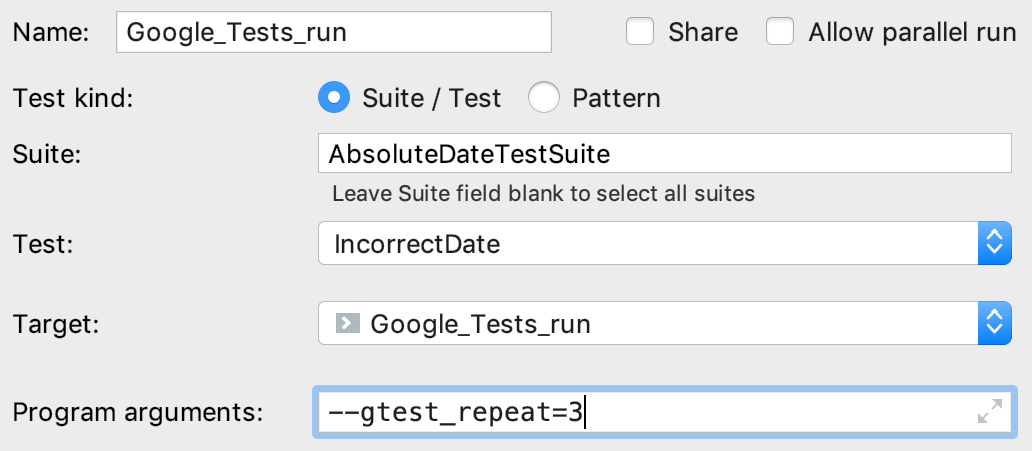

Specify the test or suite to be included in the configuration, or provide a pattern for filtering test names. Auto-completion is available in the fields to help you fill them in quickly:

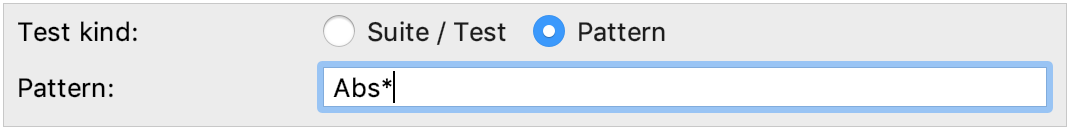

Set wildcards to specify test patterns, for example:

In other fields of the configuration settings, you can set environment variables and command-line options. For example, use the Program arguments field to pass the --gtest_repeat flag and run a Google test multiple times.

The output will look as follows:

    Repeating all tests (iteration 1) ...
    Repeating all tests (iteration 2) ...
    Repeating all tests (iteration 3) ...

Save the configuration, and it's ready for Run or Debug.

Running tests

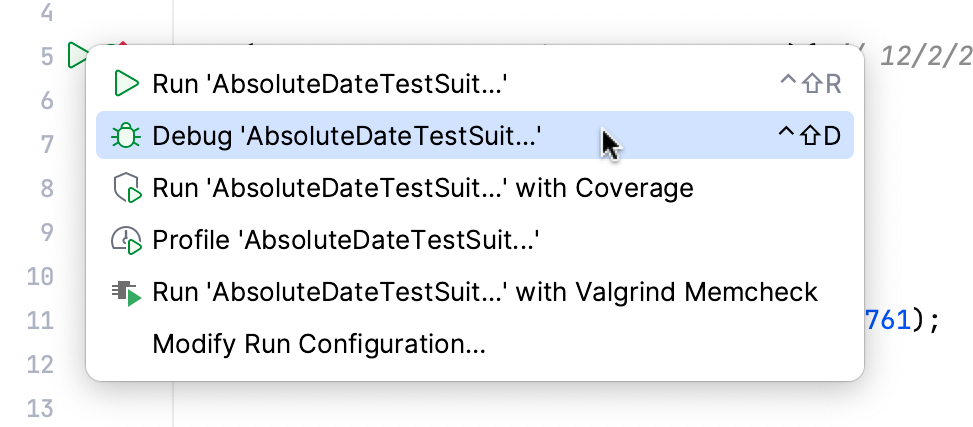

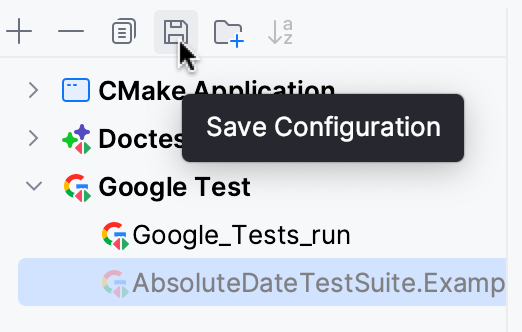

In CLion, there are several ways to start a run/debug session for tests, one of which is using special gutter icons. These icons help you quickly run or debug a single test or a whole suite/fixture.

Gutter icons also show test results (when already available): success or failure.

When you run a test/suite/fixture using gutter icons, CLion creates a temporary Google Test configuration, which is greyed out in the list of configurations. To save a temporary configuration, select it in the dialog and press .

Exploring results

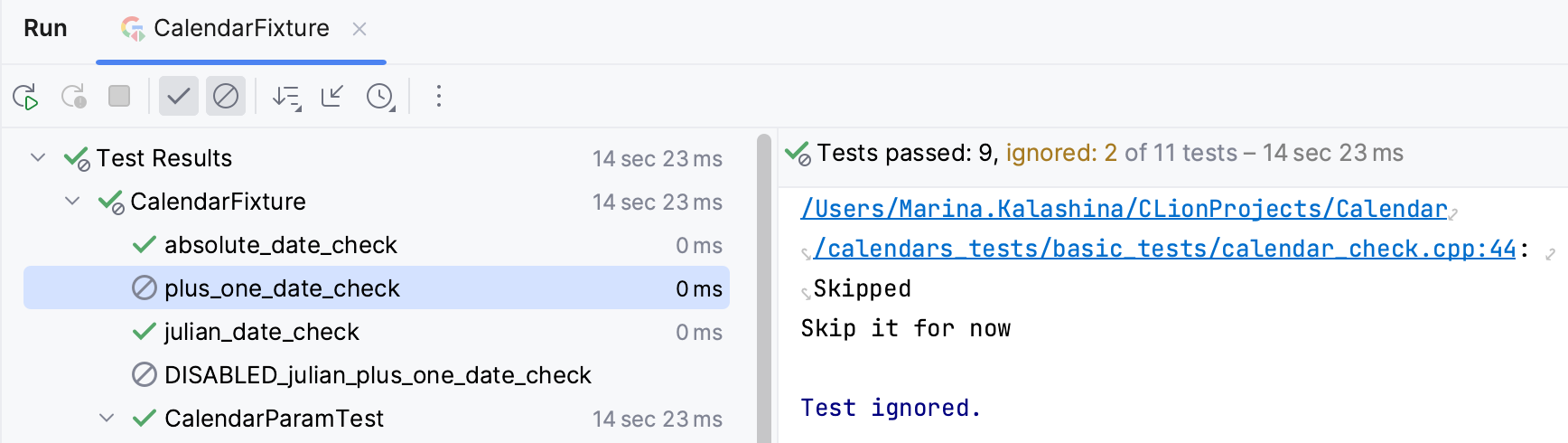

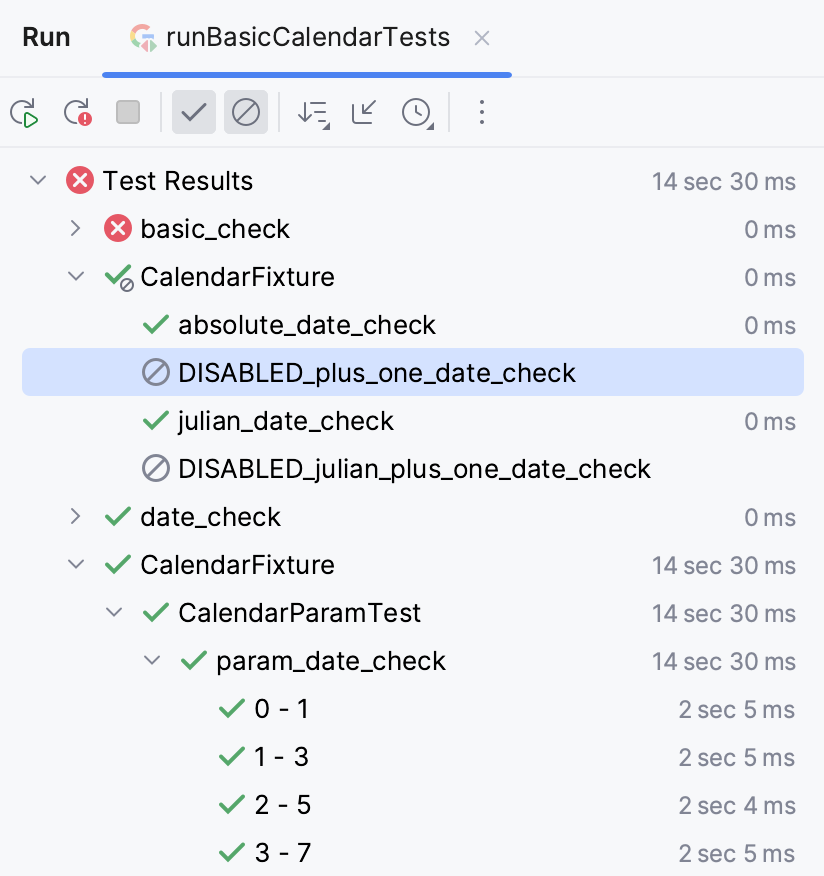

When you run tests, the results (and the process) are shown in the test runner window. This window includes:

progress bar with the percentage of tests executed so far,

tree view of all the running tests with their status and duration,

tests' output stream,

toolbar with the options to rerun failed tests, export or open previous results saved automatically, sort the tests alphabetically to easily find a particular test, or sort them by duration to understand which test ran longer than others.

The test tree shows all the tests while they are being executed one by one. For parameterized tests, you will see the parameters in the tree as well. Also, the tree includes disabled tests (those with the DISABLED_ prefix in their names) and marks them as skipped with the corresponding icon.

Skipping tests at runtime

You can configure some tests to be skipped based on a condition evaluated at runtime. For this, use the GTEST_SKIP() macro.

Add the conditional statement and the GTEST_SKIP() macro to the test you want to skip:

Use the Show ignored icon to view/hide skipped tests in the Test Runner tree: